“Human heartbeats can become kick drums, birdsong and whale calls can become melodies”: Exploring the new industry of AI-integrated hardware effects pedals

AI is making the jump from software to hardware with a plethora of smart effects pedals. Is it just another gimmick or will AI-based hardware finally legitimise the controversial technology?

Artificial intelligence is generally thought of as a software tool. This is especially true when it comes to its uses in music production. Whether that be as a mixing assistant in a plugin like iZotope’s Ozone or one of Sonible’s Smart products, an in-DAW chatbot such as Safari Audio’s Meaw: Assist, or a contentious generative music platform like Suno, AI tends to stay confined to the intangible digital world. That is, until now.

We’re standing on the precipice of a new era of music production, one that moves neural networks and large language models out of the walled laptop garden and into the real world via hardware devices that we interact with physically.

This is happening most notably in the form of effects pedals, with three high-profile releases from Roland, Polyend and newcomer Groundhog Audio in various stages of completion.

Will putting AI into physical units that we can bodily interact with legitimise this often rage-inducing technology?

AI in music production is controversial, to say the least. While new technology has often been met with no little degree of suspicion by musicians, with the synthesizer famously falling afoul of musicians’ unions and samplers causing all kinds of copyright headaches for the music industry in the 1980s, AI feels different.

This could be because the AI takeover is not restricted to the world of music production.

Whereas music unions worried that analogue synths were going to replace musicians, AI actually seems to be doing it.

And not just musicians. Professions across the board are in danger, with a much-touted jobs apocalypse supposedly on its way. It’s no wonder that some people have a Luddite-like knee-jerk reaction to the term ‘AI’ - seeing any implementation of the tech as inherently bad.

Somewhere between AI-utopia and the future envisioned by the Terminator films lies reality and the most probable way forward. It will probably shake down more like the coming of computers did, replacing older jobs and tech but creating new opportunities. Most of us now couldn’t imagine making music without a computer or related electronic device. AI may fill a role like this within the next 10 years.

AI doesn’t have to be scary, as the three effects pedals profiled here demonstrate. All have been made with care and concern for human musicians, and are being presented not as replacements for creativity but as tools to aid in music creation and production, just like the effects and instruments that we already use. But does the AI-imbued tech really open any new doors?

Roland Project LYDIA

Roland recently announced its first AI-based commercial product, Melody Flip, but the company has been quietly experimenting with artificial intelligence for a few years now as part of its Roland Future Design Lab R&D department.

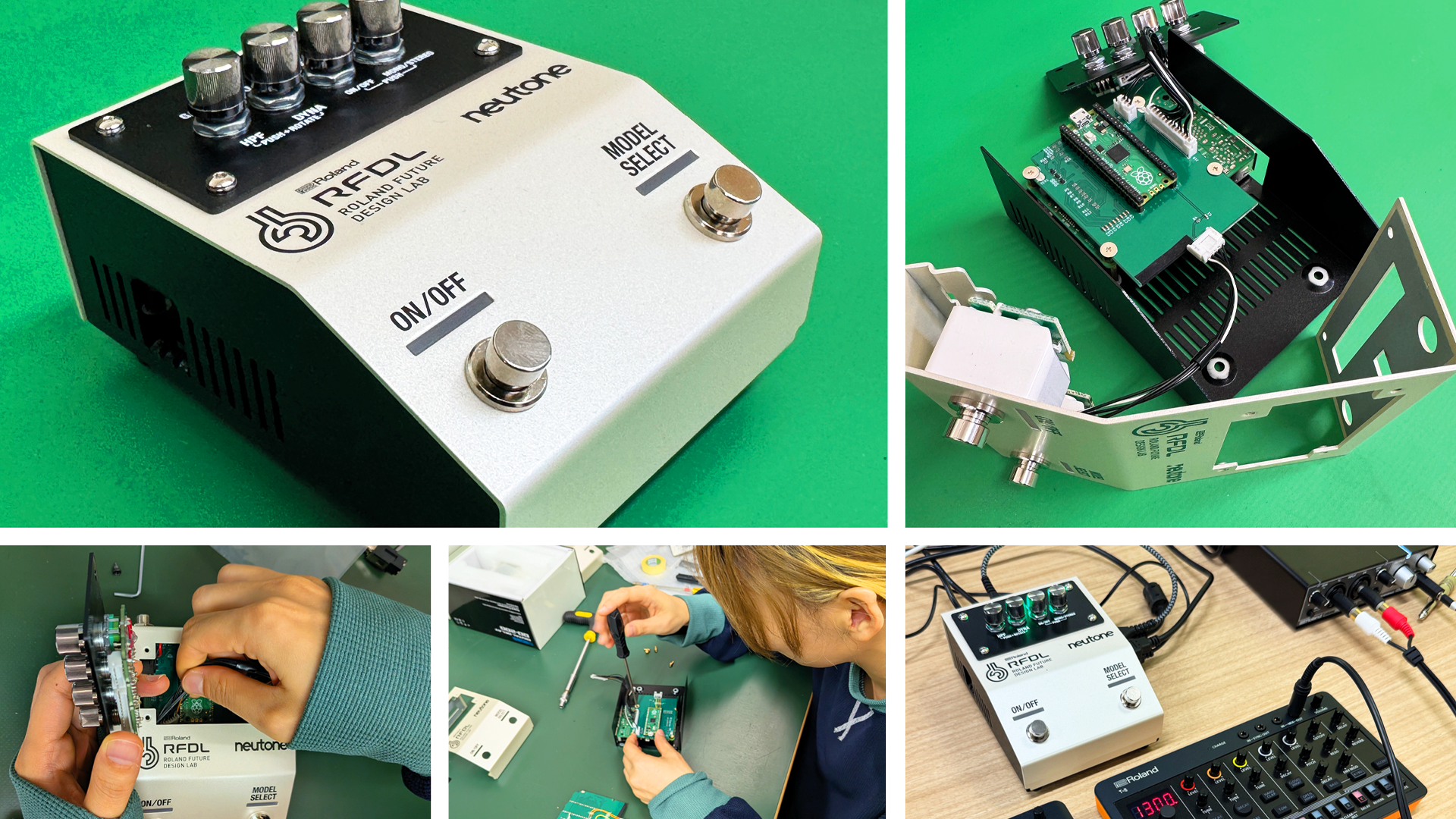

One result of this is Project LYDIA, a prototype hardware tabletop effects unit that puts AI inside the kind of stomp box that subsidiary BOSS is well known for.

Developed alongside Tokyo-based AI effects house Neutone, Project LYDIA uses a version of Neutone’s Morpho technology that allows users to apply the tonal qualities of a trained model to an incoming audio signal, a process known as timbre transfer.

As a blog post on Roland’s site explains, “Human heartbeats can become kick drums, birdsong and whale calls can become melodies, and your noisy coffee machine can become a breakbeat”. Morpho as it exists now also allows users to import self-created neural network models via the company’s training system, and future iterations of Project LYDIA may also include this.

While Morpho is fun to play with on its own (you can download a free version of the plugin from the Neutone site), having the technology inside a Roland-quality effects box makes it a new experience altogether.

We had the chance to try it out at Roland’s new headquarters in Hamamatsu, Japan, applying various neural models to a T-8 drum machine in real time.

The unexpected novelty of the juxtaposition between the source drums and the results, and the high audio quality, made us yelp with glee - much to the surprise of people trying to carry on a conversation around us. The results were so new, so novel, we wanted to spend hours playing with it. Surely the mark of useful music production technology?

Project LYDIA is just a prototype, what Roland is calling a ‘Technology Preview’. The Roland Future Design Lab doesn’t exist to make products but to figure out what may be possible for future releases. We can say, however, that it would be a shame if this technology didn’t ever come to market.

Being able to apply these unique sound models to an incoming signal live, and via a physical interface, certainly opens up all sorts of new sound-mangling vistas. Imagine playing your guitar live on stage and combining the post-distortion tone with a model trained on drums, or the sound of trains, or even your own voice. The possibilities are limitless.

Groundhog Audio OnePedal

A pedal that makes your guitar sound like a drum may be novel, but it may not always be practical.

For guitarists, trying to nail the tone from a favourite song may be more important. Groundhog Audio, a new AI-based guitar effects company, is promising just that with its OnePedal, a hardware device that pairs with an app that aims to recreate the guitar tone of any song you upload to it.

The pedal, which surpassed its Kickstarter campaign goal more than 20-fold, acts as a physical conduit to the app on your phone.

There are stomp controls to advance through song profiles stored in the pedal, a knob to quickly scroll by hand, and a tone switch to select different tones that may be present in a single song, such as lead, solo, or rhythm. The focus is on playing that tone in the moment, at least as far as the physical pedal is concerned.

The app is more powerful, and it’s where the tone creation happens. Either choose one of the reportedly 100,000-plus songs already in the database or upload one of your own.

The AI present in the app will use stem separation technology to isolate the guitar, analyse the sound, and then reconstruct it using an effects chain that you can further customise in terms of effects (chorus, distortion, echo, etc.) and virtual amplifiers and cabinets.

The AI reportedly understands music styles, guitar gear and production techniques, and also allows you to specify things like output and pickup type. Once you have the tone, you can then send it to the OnePedal for offline playing.

Groundhog Audio also recently announced a desktop version of the app that bypasses the pedal and lets you clone tone via your computer. The app is not available yet but will have a subscription payment model (owners of the OnePedal get a lifetime sub).

The OnePedal starts shipping to backers this spring (2026). How well it does what it claims remains to be seen.

Redditors are, unsurprisingly, skeptical. “This would be an impressive accomplishment,” says user LandosMustache, “but it’s akin to programming a full flagship multi-effects processor AND an AI model which can both parse guitar tones and use the multi-effects unit.”

Others point out that coming up with tone on your own is part of the fun, a personal creative journey that shouldn’t be relegated to a machine.

“Learning how all of this works, finding my own sound by looking for something specific or just by luck, it's the biggest part of the fun for me,” says Yan_HL. “I just find it sad to think that anything that will come out of it is already someone else’s sound.”

What is interesting, though, is no one is bemoaning it for being AI. It’s the corner-cutting that is concerning to them. But if users can take what they’ve learned from the tone-matching and apply it to something else, now they’ve learned a new skill.

As Ella Hafermalz, an associate professor at Vrije Universiteit Amsterdam, says in a Guardian article, “(AI) shouldn’t be a prison that holds you in, it should be a stepping stone - a way to get out, and do other things.”

Polyend Endless

Of the three pedals profiled here, the one that you can actually buy right now is Endless from Polyend. The name is apt, as it lets you do lots of things with effects. In fact, anything that you can imagine.

A combination of prompts and stomps, Endless is a hardware effects pedal with an aluminium enclosure, three knobs, and two foot switches. How these function depends on the algorithm that you’ve loaded. The algorithm could be a distortion. It could be a delay, or a looper, or a tremolo. Or something entirely new. Polyend provides a ready-made selection to play with but the real fun is in generating your own effects, either via code (if you have the ability) or a text-based prompt.

Enter instructions in plain language in the desktop Playground app, which connects to the pedal via USB. Here you interact with a large language model that dips into Polyend’s collection of code to create what you ask for.

Recently, we tried out Endless alongside Polyend founder Piotr Raczyński when he visited us in our video studio.

“Playground builds on a large library of DSP algorithms our team has developed over many years,” the company said. “The AI layer interprets your prompt, then combines or adapts these building blocks to produce new effects.”

The AI will speak back, asking how you want to use the knobs and other functions, and will also crucially run tests to make sure your idea works.

As you’re engaging with a commercial AI, these interactions aren’t free and require the use of tokens. Buying the pedal gets you 2000, and you can buy more in batches of the same amount for $19.99. Polyend estimates that most effects will cost between one and five dollars to generate. You can share your effects with the community; downloading these (or Polyend’s) is free.
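To put those figures in perspective, here is a rough back-of-the-envelope sketch in Python. It uses only the numbers quoted above (2000 tokens per $19.99 batch, and Polyend's estimate of one to five dollars per effect). The function names are our own, and the sketch assumes that the dollar estimate converts to tokens at the top-up price, which Polyend has not confirmed.

```python
# Back-of-the-envelope token maths for Polyend Endless.
# Figures come from the article; helper names are hypothetical, and we
# ASSUME the dollar estimate maps onto tokens at the top-up batch price.

BATCH_TOKENS = 2000        # tokens per batch (also bundled with the pedal)
BATCH_PRICE_USD = 19.99    # price of one top-up batch
EFFECT_COST_RANGE_USD = (1.0, 5.0)  # Polyend's estimate per generated effect


def usd_per_token(batch_price=BATCH_PRICE_USD, batch_tokens=BATCH_TOKENS):
    """Implied price of a single token when bought in a batch."""
    return batch_price / batch_tokens


def tokens_for(cost_usd):
    """Approximate number of tokens that a given dollar cost represents."""
    return cost_usd / usd_per_token()


def effects_per_batch(cost_usd, batch_price=BATCH_PRICE_USD):
    """Whole effects one batch can pay for at a given cost per effect."""
    return int(batch_price // cost_usd)


if __name__ == "__main__":
    low, high = EFFECT_COST_RANGE_USD
    print(f"Price per token: ${usd_per_token():.4f}")
    print(f"Tokens per effect: about {tokens_for(low):.0f} to {tokens_for(high):.0f}")
    print(f"Effects per batch: {effects_per_batch(high)} to {effects_per_batch(low)}")
```

Under these assumptions a token works out at roughly a cent, so a single batch would cover somewhere between a handful and a couple of dozen generated effects, depending on how complex your requests are.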

Along with the creativity of playing with effects, making your own is a kind of creativity in itself. While some may say that entering prompts is not creative, you’re still making choices in terms of design and user experience.

Of course, some of the sticky points of AI usage still apply.

All prompts require energy expenditure, and while Polyend itself is “100% energy self-sufficient thanks to solar power and heat pumps,” the LLM, on the other hand, is not.

There is also no mention of the specific AI company currently employed. “We … designed the infrastructure to stay flexible about AI providers, so we can evaluate different models over time and choose the best balance of quality, reliability, and cost as the technology evolves,” says the company.

While one hopes that Polyend is making good choices, if you prefer that your money not go to a specific AI provider because of military contracts, etc., the lack of disclosure makes that difficult to confirm.

Whichever way we cut it, it seems that the AI-incorporating hardware pedal market is certainly going to expand further as the decade progresses. On the strength of this trio of initial offerings, that’s no bad thing in our view.

As all of music technology’s great leaps forward have done, these applications open up new creative avenues we otherwise wouldn’t be able to access.

Time will tell, however, whether the widespread cultural AI-phobia will ward off those who might actually benefit from what these innovations bring to the table.

Adam Douglas is a writer and musician based out of Japan. He has been writing about music production off and on for more than 20 years. In his free time (of which he has little) he can usually be found shopping for deals on vintage synths.
