Meet Valery Vermeulen, the scientist and producer turning black holes into music

The Mikromedas project brings together theoretical physics and electronic composition by transforming data from deep space into sound

Scientific pursuits have often acted as the inspiration for electronic music, from Kraftwerk’s The Man-Machine through to Björk’s Biophilia and the techno-futurist aesthetic of acts like Autechre and Aphex Twin.

Scientist, researcher, musician and producer Valery Vermeulen is taking this one step further with his multi-album project Mikromedas, which transforms scientific data gathered from deep space and astrophysical models into cosmic ambient compositions.

The first album from this project, Mikromedas AdS/CFT 001, runs data generated by simulation models of astrophysical black holes and extreme gravitational fields through custom-made Max/MSP instruments, resulting in a unique kind of aleatoric music that’s not just inspired by scientific discovery, but literally built from it.

Could you tell us a little about your background in both science and music?

“I started playing piano when I was six or seven years old. The science part came when I was like, 15 or 16, I think in my teenage years, I got to the library, and I stumbled upon a book, which had a part on quantum physics. I was very curious. And I think this is how the two got started.

“During my career path I always had the impression that I had to choose one or the other: music or mathematics, music or physics, theoretical physics. So in the beginning, I did a PhD in the mathematical part of superstring theory with the idea of doing research in theoretical physics. And I was really interested in the problem of quantum gravity - that's finding a theory that unifies quantum physics and general relativity theory.

“But at the same time, I was always making music, I started busking on the street, then I started playing in bands. Then, after my PhD, I switched, because I wanted to pursue more music. So I started at IPEM, that is the Institute for Psychoacoustics and Electronic Music in Belgium.”

What kind of work were you doing there?

“At IPEM I did research on music, artificial intelligence, and biofeedback. Out of that came the first project which combined the two and that is called EMO-Synth. With that project, with a small team, we try to build a system that can automatically generate personalised soundtracks that adapt themselves to emotional reaction.

“So the idea of the system is to have an AI assistant that can automatically generate a personalised soundtrack for a movie, specialised and made for you using genetic programming. That's a technique from AI.”

Could you tell us about the Mikromedas project?

“After EMO-Synth, I wanted to do some more artistic stuff. That is how I stumbled upon Mikromedas, the project with which I’ve recorded the album. There's two series for the moment, and every series has a different topic. The first series started in 2014, as a commissioned work for The Dutch Electronic Arts Festival in Rotterdam.

“They wanted me to do something with space and sound. The question was: could I represent a possible hypothetical voyage from earth to an exoplanet near the centre of the Milky Way? Is it possible to evoke this using only sound, no visuals, that was the question. And this is how I stumbled upon data sonification for the first time.

“Basically, that's the scientific domain in which scientists are figuring out ways to use sound to convey data. Normally, you would look at data - as a data scientist, you look at your screen, you present the data on your screen, and you try to figure out structures in the data. But you can also do that using sound. It’s called multimodal representations. So if you both use your ears and your eyes, you can have a better understanding of data.
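One common way to convey data through sound is to map each value onto a pitch. The sketch below is only an illustration of that idea, not Vermeulen's own method: the readings are made-up numbers, and the function names and the note range (MIDI 48 to 84) are assumptions chosen for the example.

```python
def midi_to_hz(note):
    """Convert a MIDI note number to a frequency in Hz (A4 = note 69 = 440 Hz)."""
    return 440.0 * 2 ** ((note - 69) / 12)


def data_to_midi(values, low_note=48, high_note=84):
    """Rescale arbitrary data values linearly onto a range of MIDI notes."""
    lo, hi = min(values), max(values)
    span = hi - lo
    notes = []
    for v in values:
        # Position of the value within the data range, from 0.0 to 1.0.
        position = 0.5 if span == 0 else (v - lo) / span
        notes.append(round(low_note + position * (high_note - low_note)))
    return notes


# Hypothetical readings, invented purely for illustration.
readings = [3.1, 4.7, 2.2, 9.8, 6.0]
notes = data_to_midi(readings)
freqs = [midi_to_hz(n) for n in notes]
```

The smallest reading lands on the lowest note and the largest on the highest, so the shape of the data becomes a melodic contour that a listener can follow by ear.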

“With Mikromedas I got into that field, a very interesting scientific domain. Of course, artists have also started using it for creative purposes. It was a one-time concert that I made the whole show for, but it turned out that I played more and more concerts with that. And this is how the Mikromedas project got started.

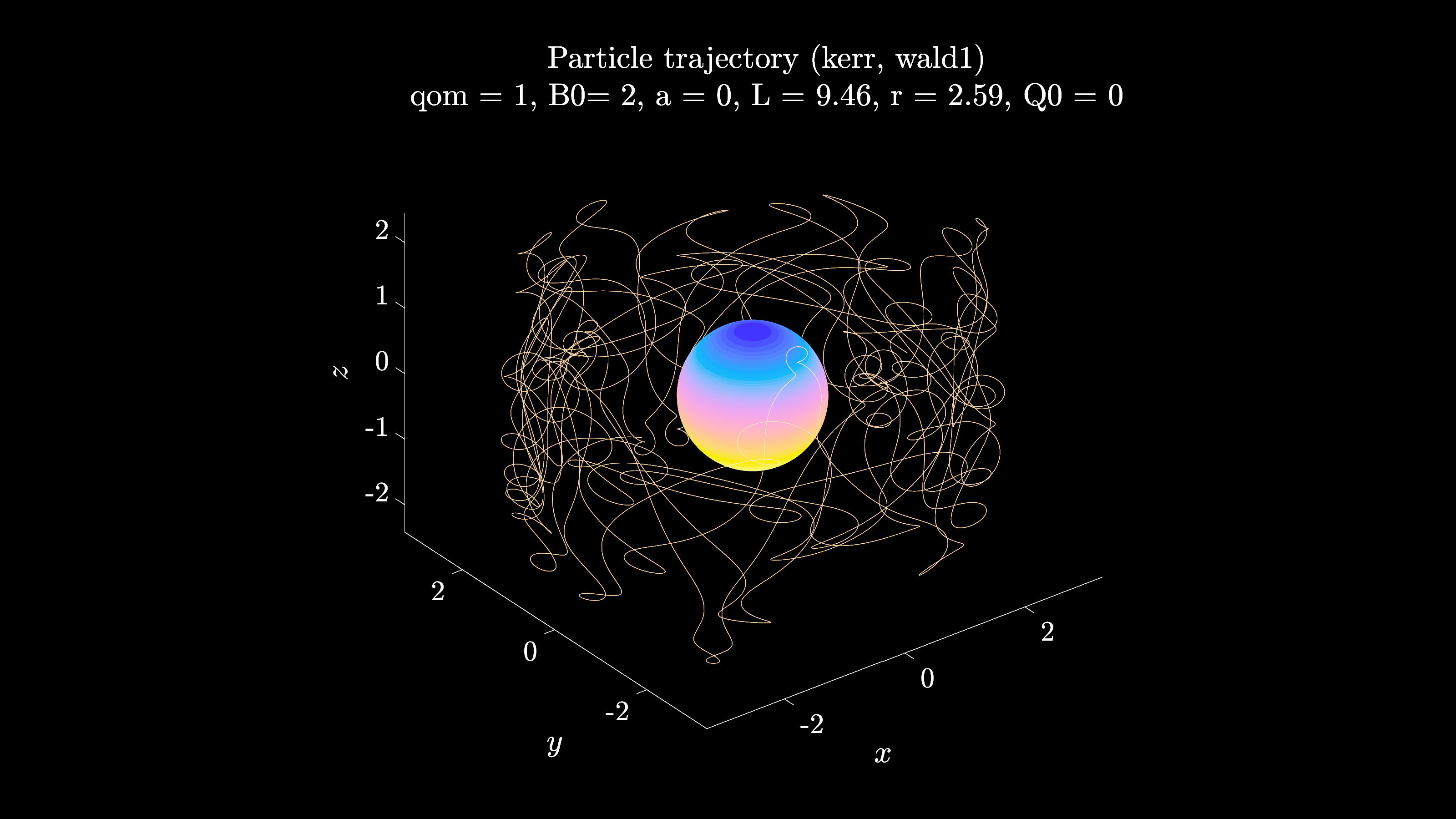

“After the first series, I wanted to dive even deeper into my fascination for mathematics and theoretical physics. I still had the idea of quantum gravity, this fascinating problem, in the back of my head. And black holes are a very hot topic - they are one of the classical examples where we can combine general relativity and quantum physics.

“The next step was, I needed to find ways to get data. I could program some stuff myself, but I also lacked a lot of very deep scientific knowledge and expertise. A venue here in Belgium put me in contact with Thomas Hertog, a physicist who worked with Stephen Hawking, and we did work on sonifications of gravitational waves, and I made a whole concert with that.

“From there, we made the whole album. It’s a bit of a circle, I think - at first the music and physics were apart from each other, these longtime fascinations that were split apart, and now they’ve come together again.”

What kinds of data are you collecting to transform into sound and music?

“If we’re talking from a musical perspective, I think the most fascinating data and the most close to music are gravitational wave data. Gravitational waves are waves that occur whenever you have two black holes, and they're too close to each other, they will swirl around each other, and they will merge to a bigger black hole. This is a super cataclysmic event. And because of this event, it will emit gravitational waves. If you encounter a gravitational wave, you become larger, smaller, thicker, or thinner. So it's sort of an oscillation that you would undergo.

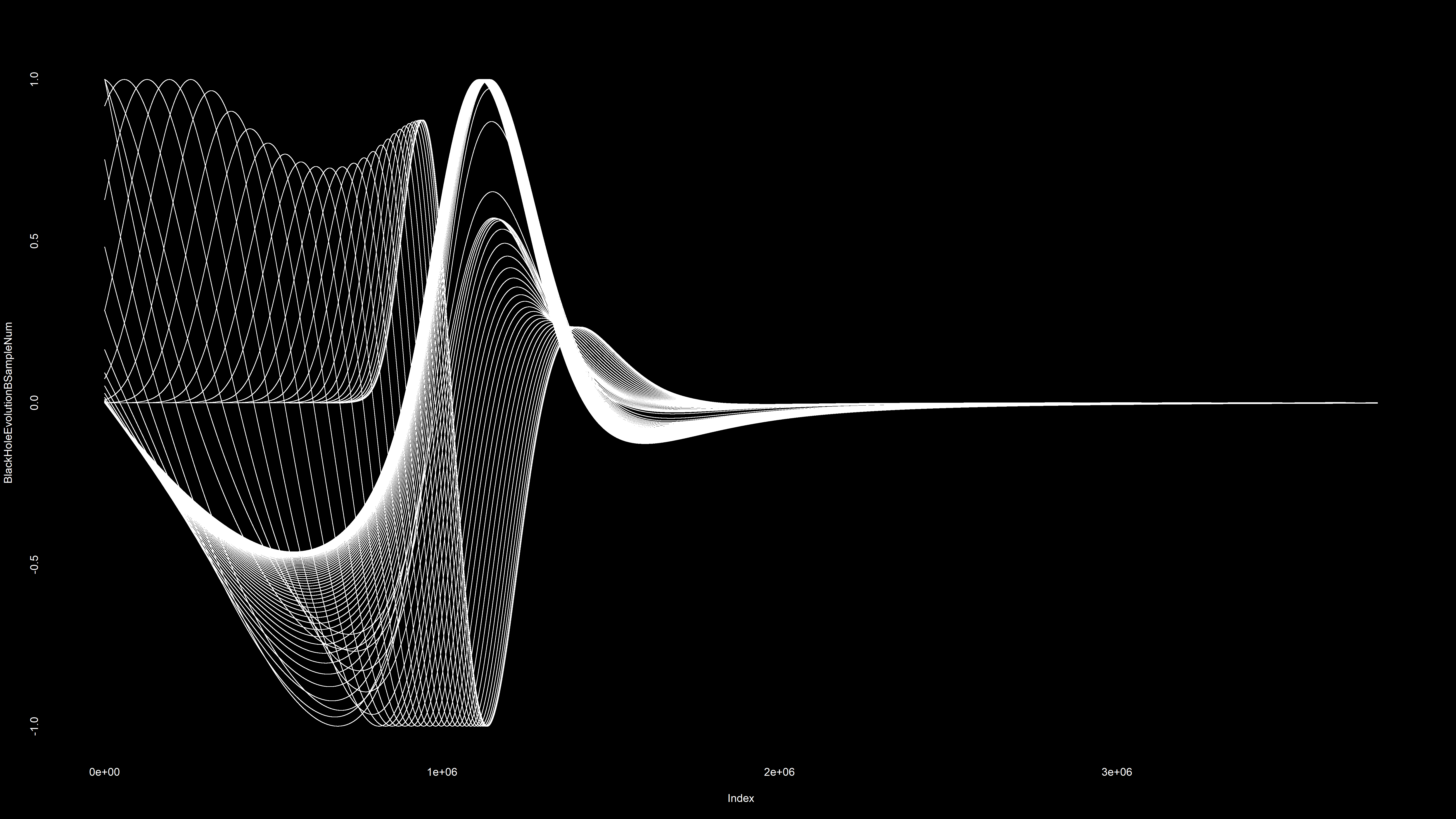

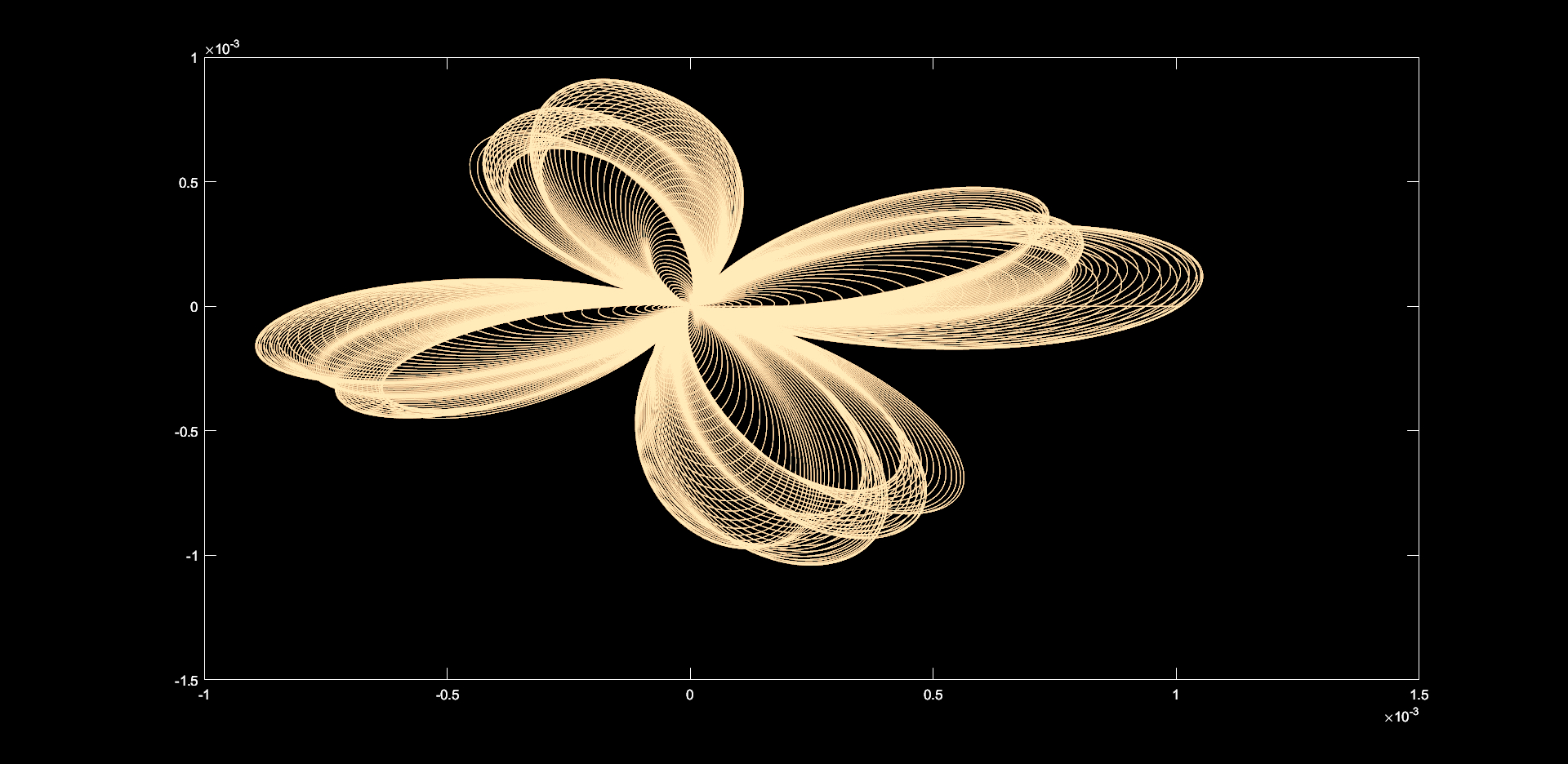

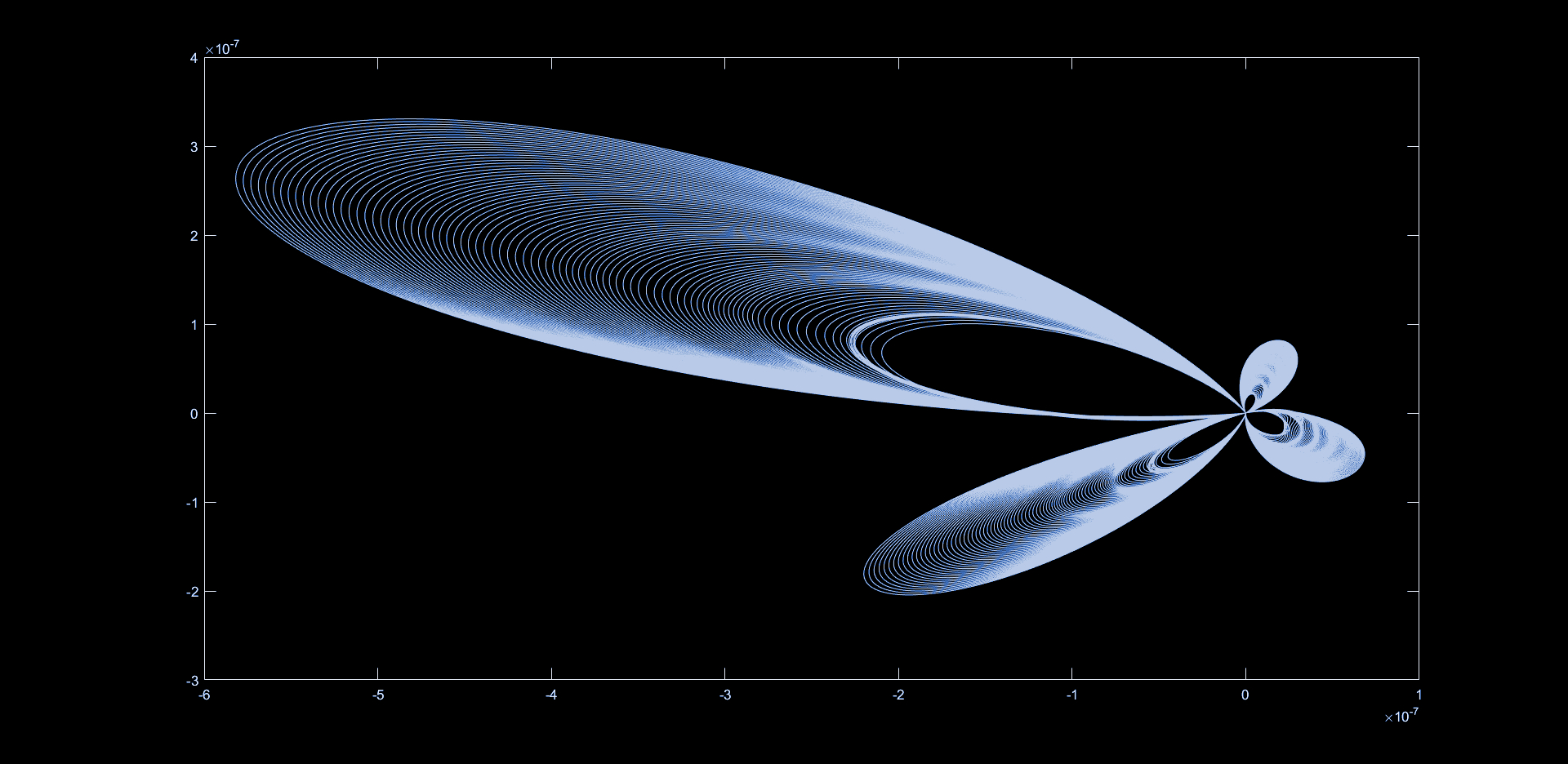

“What I discovered via the work with Thomas is that there's some simulations of gravitational waves that are emitted by certain scenarios, because you have different types of black holes, you can have different masses, etc. To calculate and to programme it, you need something which we call spherical harmonics. And those are three dimensional generalisations of sine wave functions.

“And if you're into sound synthesis, I mean, if you're studying sounds, this is what we all learn about - the square wave is just a sum of all the overtones of a fundamental frequency, the sawtooth wave has all the overtones linearly decaying. And it's the same principle that holds with those generalisations of sine waves, those spherical harmonics. Using those, you can calculate gravitational waves in three dimensions, which is really super beautiful to watch. And this is what I did for one of the datasets.
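The overtone principle described here can be sketched with plain sine waves. In the textbook Fourier series, a square wave is built from the odd harmonics with amplitudes falling off as 1/n, while a sawtooth uses every harmonic with 1/n amplitudes. The function names below are assumptions for this example, and the code covers only the one-dimensional case, not the spherical harmonics used for the gravitational wave data.

```python
import math


def square_partial(t, f0, n_terms):
    """Partial Fourier sum of a square wave: odd harmonics, amplitude 1/n."""
    return (4 / math.pi) * sum(
        math.sin(2 * math.pi * (2 * k - 1) * f0 * t) / (2 * k - 1)
        for k in range(1, n_terms + 1)
    )


def sawtooth_partial(t, f0, n_terms):
    """Partial Fourier sum of a sawtooth wave: all harmonics, amplitude 1/n."""
    return (2 / math.pi) * sum(
        (-1) ** (k + 1) * math.sin(2 * math.pi * k * f0 * t) / k
        for k in range(1, n_terms + 1)
    )


# One cycle of each waveform at 1 Hz, built from the first 50 terms.
cycle = [i / 100 for i in range(100)]
square = [square_partial(t, 1.0, 50) for t in cycle]
saw = [sawtooth_partial(t, 1.0, 50) for t in cycle]
```

Adding more terms makes both shapes sharper. Spherical harmonics play the same role as these sine waves, but as building blocks for patterns on a sphere rather than along a line.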

“They say everything is waves. And it is, in a way - I mean, I don't like this New Age expression so much, saying ‘everything is connected’ - but in some sort of way, vibrations are, of course, essential to music, but also to physics.”

How are you transforming that data into sounds we can hear?

“First I made 3D models. So these are STL files, 3D object files. And then, together with Jaromir Mulders, he’s a visual artist that I collaborate with, he could make a sort of a movie player. And so you can watch them in 3D, evolving. But then I thought, how on earth am I going to use this for music?

“The solution was to make two-dimensional intersections with two-dimensional planes. And then you have two-dimensional evolving structures. And those you can transform into one-dimensional evolutions and one dimensional number streams. Then you can start working with this data - that’s how I did it. Once you have those, it's a sort of a CV signal.
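The reduction from a 3D structure to a one-dimensional number stream can be sketched roughly as follows. The interview does not say how each 2D slice is turned into a single number, so everything here is an assumption made for illustration: `toy_field` is an invented stand-in rather than real simulation data, the slice is the plane z = 0, and each time step is reduced by taking the RMS of samples on a circle in that plane.

```python
import math


def toy_field(x, y, z, t):
    """Hypothetical 3D wave amplitude at time t (an invented toy, not physics data)."""
    r = math.sqrt(x * x + y * y + z * z) + 1e-9
    return math.cos(2 * math.atan2(y, x)) * math.sin(4 * r - 2 * t) / r


def slice_to_value(t, z=0.0, radius=1.0, n_points=64):
    """Sample the field on a circle in the plane z = const and return its RMS."""
    total = 0.0
    for i in range(n_points):
        phi = 2 * math.pi * i / n_points
        v = toy_field(radius * math.cos(phi), radius * math.sin(phi), z, t)
        total += v * v
    return math.sqrt(total / n_points)


def normalise(stream):
    """Rescale a number stream to the range 0..1, like a unipolar control signal."""
    lo, hi = min(stream), max(stream)
    if hi == lo:
        return [0.0 for _ in stream]
    return [(v - lo) / (hi - lo) for v in stream]


# One number per time step: a 1D stream derived from the evolving 2D slice.
times = [0.05 * i for i in range(200)]
control = normalise([slice_to_value(t) for t in times])
```

The resulting list behaves much like the CV signal mentioned above: a slowly changing value between 0 and 1 that can drive a synth parameter.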

“I'm working in Ableton Live, using Max/MSP and Max for Live, and can easily connect those number streams to any parameter in Ableton Live, using the API in Max for Live, you can quite easily connect it to all the knobs you want. Another thing that I was using was quite a lot of wavetable synthesis. Different wave tables: Serum, Pigments, and the Wavetable synth from Ableton.”
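Connecting a number stream to a knob comes down to rescaling each value to that parameter's range. The sketch below shows only the scaling step in plain Python and does not call the Max for Live API. The function names and the example ranges (0 to 127, 20 Hz to 20 kHz) are assumptions for illustration.

```python
def map_linear(v, out_min, out_max):
    """Map a 0..1 control value linearly onto a parameter range."""
    clamped = min(1.0, max(0.0, v))
    return out_min + clamped * (out_max - out_min)


def map_exponential(v, out_min, out_max):
    """Map a 0..1 control value exponentially (out_min must be greater than 0).

    Useful for frequency-like parameters, where equal steps should sound
    like equal musical intervals.
    """
    clamped = min(1.0, max(0.0, v))
    return out_min * (out_max / out_min) ** clamped


# Hypothetical control stream with values between 0 and 1.
stream = [0.0, 0.25, 0.5, 0.75, 1.0]
knob_values = [map_linear(v, 0, 127) for v in stream]
cutoff_hz = [map_exponential(v, 20.0, 20000.0) for v in stream]
```

A linear mapping suits parameters such as a dry/wet amount, while the exponential version is a common choice for a filter cutoff.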

How much of what we’re hearing on the album is determined by the data alone, and how much comes from your own aesthetic decision-making?

“I wear two hats. So one hat is the hat of the scientist, the physicist, and the other hat is the hat of a music producer, because I also studied music composition here at the Conservatory in Ghent. And I'm also teaching at the music production department there. It’s all about creativity. That's the common denominator, you know, because I always think it's difficult to say this is the science part, this is the musical part.

“In the more numerical part, what I would do is collect the data sets. You have all the different datasets, then you have to devise different strategies to sonify it, to turn all those numbers into sound clips, sound samples, you could say. These are sort of my field recordings, I always compare it to field recordings, but they are field recordings that come from abstract structures that give out data. I collect a whole bank of all these kinds of sounds.

“Next you design your own instruments, in something like Reaktor or Max/MSP, that are fed by the data streams. Once I have those two, I'm using those two elements to make dramatic compositions, abstract compositions. One theme of the album was to try to evoke the impression of falling into a black hole, something that is normally not possible, because you break all laws of physics, because we don't know what the physics looks like inside of a black hole, the region inside the event horizon.

“Then I wear a hat as a music producer, because I want to make this into a composition. I was working before for a short time as a producer for dance music. So I want to have a kind of an evolution in the track. So how am I going to do that? I'm working with the sounds, I'm editing the sounds a lot with tools in Ableton, in Reaktor, and I also have some analogue synths here.

“So I have a Juno-106, a Korg MS-20. Sometimes I would just take my Juno, I put it into unison, you know, use the low pass filter, and then get a gritty, beautiful low analogue sound to it, mix it underneath to give an impression of this abstract theme.

“After that, once the arrangement is done, then there’s the mixing process. I did quite a lot of mixing, I think over a year, because I wanted the sound quality to be really very good. And I also started using new plugins, new software. And the whole idea was to make it sound rather analogue. I hope I managed to do the job with a record that did not make it sound too digital.”

Which plugins were you using to mix the material?

“Slate, of course. SoundToys, Ohmforce, I love Ohmforce plugins. Waves, we use a lot of Waves plugins. I also use the native plugins of Ableton. I started to appreciate them because before that I didn't know how to use them properly. I also have some hardware here. So I have a Soundcraft mixing table that I love a lot.

“The record was released on an international label, Ash International. It's a subsidiary of Touch. And Mike Harding, he let me know, the record is going to be mastered by Simon Scott. He's the drummer of Slowdive, the band Slowdive. So I was a little bit nervous to send a record to Simon, but he liked it a lot. So it's like, okay, I managed to do a mix that's okay. I was really happy about it.”

Aside from the scientific inspiration, what were the musical influences behind the project?

“Because the music is quite ambient, quite slow, Alva Noto is a big inspiration. Loscil, I was listening to a lot at the time. Biosphere, Tim Hecker. Also, at the same time, to get my head away a little bit, I tend to listen to other kinds of music when I’m doing this stuff. I was at the same time studying a lot of jazz, I’m studying jazz piano. I was listening to a lot of Miles Davis, Coltrane, Bill Evans, McCoy Tyner. I’m a big Bill Evans fan because of his crazy beautiful arrangements. Grimes is a big influence, and Lil Peep, actually - his voice is like, whoa.”

Do you have any plans to play the material live? How would you approach translating the project to a live performance?

“There are plans to play live. We're gonna play it as an audiovisual show. The visuals are produced by Jaromir Mulders, this amazing, talented visual artist from the Netherlands. Live, of course, I'm using Ableton Live. I have a lot of tracks, and basically splitting them out into a couple of different frequency ranges. So high, high-mid, low-mid, low and sub frequency ranges.

“Then I try to get them in different clips, loops, that make sense. And then I can remix the tracks in a live situation, I also add some effects. And I also add some new drones underneath. There's no keys or musical elements going into it. It's a very different setup than I was used to when I was still doing more melodic and rhythmic music.”

What’s in store for the next series of the project?

“There’s two routes, I think. Mikromedas is experimental, and I want it to remain experimental, because it’s just play. I’ve discovered something new, I think - it's finding a way to make a connection between the real hardcore mathematical theoretical physics, the formulas, and the sound synthesis and the electronic music composition. But with one stream that I'm looking at, I already have a new album ready. And that's to combine it with some more musical elements, just because I'm very curious.

“I think the Mikromedas project gave me a new way to approach making electronic music. Sometimes people ask me, why on earth make it so difficult? I mean, just make a techno track and release it. But no, I mean, everyone is different! And this is who I am. But going back a little bit towards the musical side, that's something that's really fascinating me.

“The other stream that I want to follow is to connect it even more with abstract mathematics. So my PhD was in the classification of infinite dimensional geometrical structures, which are important for superstring theory. The problem was always how can you visualise something that is infinitely dimensional. So you have to take an intersection with a finite dimensional structure to make sense out of it. But now I'm thinking that maybe I can try to make a connection with that and with sound, that's even more abstract than black holes. Making a connection with geometry, 3D, and sound using sonification.”

Mikromedas AdS/CFT 001 is out now on Ash International.

You can find out more about Valery's work by visiting his website or Instagram page.

- Mikromedas AdS/CFT 001 was made possible via a co-production with Concertgebouw Brugge (BE) and Baltan Laboratories (NL). Scientific aspect realized in collaboration with The University of Alabama (US), KU Leuven (BE) and the University of Antwerp (BE).

I'm MusicRadar's Tech Editor, working across everything from product news and gear-focused features to artist interviews and tech tutorials. I love electronic music, and I love writing about the tools and techniques we use to make it.