The A to Z of computer music: C

Another instalment in our anthology of digital production terminology

We unleash a concentrated cavalcade of definitions, cluing you in on some of the finer points of MIDI, filters and microphones.

----------------------------------------------------------------------------------------------------------------------

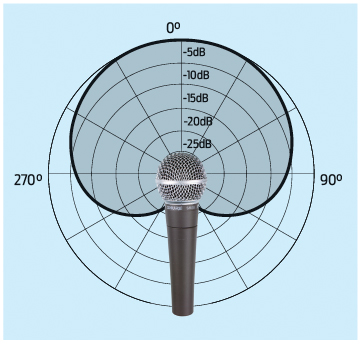

Cardioid (pickup pattern)

A microphone with a cardioid pickup pattern is more sensitive to sound coming from directly ahead than from behind it, as shown in the diagram above. In short, whatever you point the mic at comes out loudest, and whatever's behind it is most strongly rejected.

Carrier signal

In applications of modulation such as AM/FM or a vocoder, a carrier signal is modulated by another signal (the modulator), resulting in an output that responds to both signals. In a vocoder, the carrier is the sound you want to process (typically a synth sound), while the modulator (usually a vocal) has the characteristics you want to impose on the carrier.

CC (MIDI)

A MIDI CC (Control Change aka Continuous Controller) is a type of MIDI data that's used to control parameters like modulation, volume, pan and sustain. When you see a MIDI controller with knobs and faders, those generally send out MIDI CCs. Once you've set up your software to receive the corresponding CCs, you can use the physical controls to manipulate things like volume or filter cutoff, and record your movements. MIDI CCs can also be drawn in with the mouse, typically in your DAW's automation lanes or MIDI editor.
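Under the hood, a CC is a short three-byte message: a status byte that says "Control Change on channel N", the controller number, and the new value (0-127). The sketch below builds one by hand, assuming channels are numbered 1-16 as most DAWs display them; the helper name `control_change` is purely illustrative, not part of any MIDI library.

```python
def control_change(channel: int, controller: int, value: int) -> bytes:
    """Build a 3-byte MIDI Control Change message (hypothetical helper).

    channel: 1-16, as shown in most DAWs; controller and value: 0-127.
    """
    if not 1 <= channel <= 16:
        raise ValueError("MIDI channel must be 1-16")
    if not (0 <= controller <= 127 and 0 <= value <= 127):
        raise ValueError("controller and value must be 0-127")
    status = 0xB0 | (channel - 1)  # 0xB0 = Control Change; low 4 bits = channel 0-15
    return bytes([status, controller, value])

# Commonly used controller numbers from the MIDI spec
MOD_WHEEL, VOLUME, PAN, SUSTAIN = 1, 7, 10, 64

# Set channel 1's volume to 100
msg = control_change(1, VOLUME, 100)
print(msg.hex(" "))  # b0 07 64

# Press the sustain pedal on channel 2 (values of 64 and above mean 'on')
pedal_down = control_change(2, SUSTAIN, 127)
```

Turning a knob mapped to, say, CC 74 simply sends a stream of these messages with changing values, which is why recorded knob movements show up as a series of points in your DAW.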

CD (compact disc)

CDs themselves are increasingly obsolete, but CD-quality audio is still very relevant as a standard for consumer audio. Specifically, we mean a stereo, 16-bit signal with a sample rate of 44,100Hz (44.1kHz).
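Those three numbers (sample rate, bit depth and channel count) are all you need to work out how much data CD-quality audio takes up. The quick calculation below is a sketch that ignores file headers and metadata, and uses decimal megabytes (1MB = 1,000,000 bytes).

```python
SAMPLE_RATE = 44_100   # samples per second, per channel
BIT_DEPTH = 16         # bits per sample
CHANNELS = 2           # stereo

bits_per_second = SAMPLE_RATE * BIT_DEPTH * CHANNELS
print(bits_per_second / 1000, "kbps")  # 1411.2 kbps

# Uncompressed size of one minute of CD-quality audio
bytes_per_minute = bits_per_second * 60 // 8
print(bytes_per_minute / 1e6, "MB")    # roughly 10.6MB
```

That 1411.2kbps figure is a useful yardstick when comparing the bitrates of compressed formats such as MP3.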

Cents

A unit for measuring small differences in musical pitch, defined as one hundredth of an equal-tempered semitone. Semitone = 100 cents; octave = 1200 cents.
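Because an octave doubles the frequency, cents map onto frequency ratios logarithmically: an interval of c cents corresponds to a ratio of 2^(c/1200). The two small helpers below (illustrative names, not from any particular library) convert in each direction, which is handy when a detune knob is labelled in cents but you want to know the actual frequency.

```python
import math

def cents_to_ratio(cents: float) -> float:
    """Frequency ratio for an interval measured in cents (1200 cents = one octave = 2x)."""
    return 2 ** (cents / 1200)

def ratio_to_cents(ratio: float) -> float:
    """Interval in cents between two frequencies whose ratio is `ratio`."""
    return 1200 * math.log2(ratio)

print(440 * cents_to_ratio(100))   # one semitone above A440: about 466.16Hz
print(ratio_to_cents(441 / 440))   # 441Hz against 440Hz: about 3.9 cents sharp
```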

Channel (audio)

Audio signals are described as having a certain number of channels. For instance, mono audio has just one channel and stereo has two, while 5.1 surround sound has six: five 'full range' channels plus the '.1', which carries low-frequency information only, for the subwoofer.

Channel (mixer)

In your music program (DAW), audio from various sources (eg, recorded audio tracks, virtual instruments, real instruments/mics connected to your audio interface) flows through channels in your DAW's virtual mixer, ultimately arriving at a destination (typically a channel called the 'master', which feeds your audio interface's output).

Each channel has its own settings, such as volume, panning, insert effects, send effects levels, and so on. Several related channels may be routed to a group channel (aka group bus) for broader control.

The terms 'track' and 'channel' are often used interchangeably. While they are technically different, they're so intrinsically related that it's rarely a source of confusion, and the context almost always makes the meaning clear.
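At its simplest, the routing described above is just gain and addition: each channel's fader scales its audio, and a group or master channel sums whatever is routed to it. The sketch below models that for mono channels only; it deliberately ignores panning, inserts and sends, and the names `db_to_gain` and `mix_bus` are hypothetical, not any DAW's API.

```python
def db_to_gain(db: float) -> float:
    """Convert a fader setting in dB to a linear gain factor (0dB = unity)."""
    return 10 ** (db / 20)

def mix_bus(inputs: list[tuple[list[float], float]]) -> list[float]:
    """Sum mono (samples, fader_db) channels into a single bus, sample by sample."""
    length = max(len(samples) for samples, _ in inputs)
    out = [0.0] * length
    for samples, fader_db in inputs:
        gain = db_to_gain(fader_db)
        for i, s in enumerate(samples):
            out[i] += s * gain
    return out

# Hypothetical kick and snare channels routed to a drum group bus...
drum_bus = mix_bus([([0.5, 0.2, 0.0], 0.0),     # kick, fader at 0dB
                    ([0.0, 0.4, 0.1], -6.0)])   # snare, fader at -6dB
# ...which is then summed with a bass channel on the master
master = mix_bus([(drum_bus, -3.0), ([0.3, 0.3, 0.3], 0.0)])
```

Pulling down the drum bus fader (the -3.0 above) changes the level of both drums at once, which is exactly why group channels are useful.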

Channel (MIDI)

MIDI data can be sent on one of 16 channels. Channels were essential in the days of all-hardware setups, because the same MIDI stream was typically passed to every device. Each device would then respond only to the notes (and other data) on its own channel, eg, bass on channel 1, lead on channel 2, etc.

In modern DAWs, you can usually send MIDI to specific devices, so you may not actually need to worry about channels at all.

As well as so-called Channel Messages (Note On, Note Off, Pitchbend, Control Change, etc), MIDI can also send System Messages (eg, timing clock, start and stop), which aren't tied to any channel and so are received by every MIDI device in the setup.
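The channel number lives in the low four bits of each channel message's status byte, while system messages use status bytes 0xF0 to 0xFF and carry no channel. The sketch below shows how a hardware synth set to one channel might decide what to act on; `should_respond` is a hypothetical helper, and it assumes complete messages (it doesn't handle MIDI's 'running status' shortcut).

```python
def should_respond(message: bytes, my_channel: int) -> bool:
    """Decide whether a device listening on `my_channel` (1-16) should act on a message.

    Channel messages carry their channel in the low 4 bits of the status byte;
    system messages (status 0xF0-0xFF) have no channel and go to every device.
    """
    status = message[0]
    if status >= 0xF0:
        return True  # system message, eg timing clock (0xF8) or Start (0xFA)
    return (status & 0x0F) + 1 == my_channel

note_on_ch1 = bytes([0x90, 36, 100])  # Note On, channel 1, MIDI note 36, velocity 100
note_on_ch2 = bytes([0x91, 72, 90])   # Note On, channel 2
clock = bytes([0xF8])                 # MIDI timing clock

bass_synth_channel = 1
print([should_respond(m, bass_synth_channel) for m in (note_on_ch1, note_on_ch2, clock)])
# [True, False, True]
```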

Chorus

This effect duplicates the audio signal, then delays one version, typically by between 15 and 35 milliseconds, modulating the delay time (and therefore the pitch) of the delayed copy to give an effect similar to unison, where multiple instruments play the same part. Chorus can be thickened further by adding more of these delayed voices, each with slightly different delay, modulation and panning values.
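Here's a minimal single-voice chorus to show the mechanism: a delay line whose length is swept by a slow sine-wave LFO, blended with the dry signal. The default settings (25ms centre delay, ±10ms depth, 0.8Hz rate, 50% wet) are illustrative assumptions chosen to sweep the 15-35ms range mentioned above, and linear interpolation is used to read between samples; commercial choruses will differ in the details.

```python
import math

def chorus(x: list[float], sample_rate: int = 44_100, base_ms: float = 25.0,
           depth_ms: float = 10.0, rate_hz: float = 0.8, wet_mix: float = 0.5) -> list[float]:
    """Single-voice chorus: blend the dry signal with a copy whose delay is swept by a sine LFO.

    With the default settings the delay sweeps between 15ms and 35ms.
    Keep depth_ms below base_ms so the delay never becomes negative.
    """
    out = []
    for n, dry in enumerate(x):
        lfo = math.sin(2 * math.pi * rate_hz * n / sample_rate)   # -1..1
        delay = (base_ms + depth_ms * lfo) * sample_rate / 1000   # delay in samples
        pos = n - delay                                           # fractional read position
        i = math.floor(pos)
        frac = pos - i
        # Linearly interpolate between the two nearest earlier samples (silence before the start)
        a = x[i] if 0 <= i < len(x) else 0.0
        b = x[i + 1] if 0 <= i + 1 < len(x) else 0.0
        wet = a + (b - a) * frac
        out.append((1 - wet_mix) * dry + wet_mix * wet)
    return out
```

Because the delay is constantly changing, the delayed copy is slightly pitch-shifted up and down, which is what creates the detuned, 'several players' effect. Adding more voices means running several of these delay lines with different settings and summing them.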

Clicks/pops

Digital clicks and pops can occur whenever there is an abrupt change in the signal level. This may happen if you cut the start (or end) of a piece of audio at a point where the waveform is not at or near zero. When played, the signal will jump immediately from zero to the waveform's starting value, sometimes resulting in an audible click. The solution is to make your cuts at zero-crossing points, and/or to use short fades and crossfades when editing audio.
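Both fixes are easy to express in code. The sketch below (with hypothetical helper names) snaps an edit point to the nearest place where the waveform changes sign, and applies a short linear fade-in so the audio starts from silence. A linear fade shape is just one assumption; DAWs offer several curves.

```python
def nearest_zero_crossing(x: list[float], index: int) -> int:
    """Return the sample index nearest to `index` where the waveform changes sign (or is exactly 0)."""
    for offset in range(len(x)):
        for i in (index - offset, index + offset):
            if 0 < i < len(x) and (x[i] == 0.0 or (x[i - 1] < 0) != (x[i] < 0)):
                return i
    return index  # no crossing found; leave the edit point unchanged

def fade_in(x: list[float], length: int) -> list[float]:
    """Apply a linear fade-in over the first `length` samples."""
    out = list(x)
    for i in range(min(length, len(out))):
        out[i] *= i / length
    return out

wave = [0.5, 0.3, -0.2, -0.4, 0.1]
print(nearest_zero_crossing(wave, 1))  # 2: the waveform crosses zero between samples 1 and 2
print(fade_in([1.0, 1.0, 1.0, 1.0], 4))  # [0.0, 0.25, 0.5, 0.75]
```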

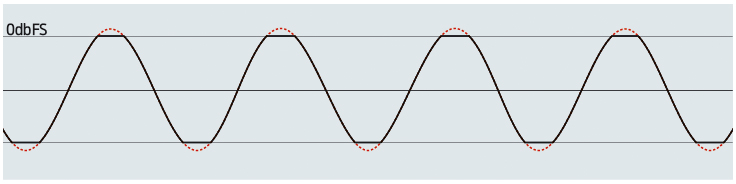

Clipping

If you've ever tried to push digital audio to its loudest, you'll have heard the distorted sound of the signal 'clipping'.

An audio interface can reproduce a maximum signal amplitude of 0dBFS (dB Full Scale). If the signal is pushed louder than this on your DAW's master channel, the data for the top and bottom parts of it will be 'clipped' off and lost - as pictured in the diagram above.

The same happens when audio is saved to an integer format such as 8-bit, 16-bit or 24-bit. Floating point formats (eg, 32-bit float) can store data above 0dBFS, but it would still clip upon playback unless you reduce its level first.
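To see why, consider converting floating-point samples (where ±1.0 represents 0dBFS) to 16-bit integers. There's simply no integer value beyond full scale, so anything louder gets flattened to the maximum. The sketch below assumes the common convention of scaling by 32767; other converters may scale or round slightly differently.

```python
def to_int16(samples: list[float]) -> list[int]:
    """Convert floating-point samples (full scale = +/-1.0) to 16-bit integers.

    Anything beyond full scale cannot be represented, so it is clipped.
    """
    out = []
    for s in samples:
        s = max(-1.0, min(1.0, s))      # hard clip at 0dBFS
        out.append(round(s * 32767))    # scale to the 16-bit range
    return out

print(to_int16([0.5, 1.0, 1.5, -2.0]))  # [16384, 32767, 32767, -32767]
```

Note that 1.0 and 1.5 end up as the same value: the difference between them has been lost for good, which is exactly the flattened waveform top shown in the diagram.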

While no individual track in a project may be loud enough to clip, several tracks summed together can raise the level to the point of clipping. Clipping may occur momentarily, as a fast, high peak that we may not always notice. Fortunately, most DAWs indicate clipping with a red light in the mixer or transport. Clipping is commonly prevented using a brickwall limiter.

Comb filter

When a signal is mixed with a slightly delayed version of itself, phase interaction cancels certain frequencies at regular intervals. For example, delaying the signal by 1ms will create frequency 'dips' or 'notches' at 500Hz, 1500Hz, 2500Hz, 3500Hz and so on, giving the spectrum its comb-like appearance. The effect can be intensified by applying feedback to the delay.
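The notch positions follow directly from the delay time τ: a frequency cancels when the delay equals half its period (or 1.5, 2.5... periods), so the notches sit at (2k + 1) / (2τ). Mixing equal amounts of the dry and delayed signal gives a level of 20·log10|2cos(πfτ)| relative to the original. The sketch below computes both, assuming an equal mix and no feedback.

```python
import math

def notch_frequencies(delay_ms: float, count: int) -> list[float]:
    """First `count` notch frequencies (Hz) when a signal is mixed equally with a copy delayed by `delay_ms`."""
    delay_s = delay_ms / 1000
    return [(2 * k + 1) / (2 * delay_s) for k in range(count)]

def comb_gain_db(freq_hz: float, delay_ms: float) -> float:
    """Level of x(t) + x(t - delay) at freq_hz, relative to x alone: 20*log10|2cos(pi*f*tau)|."""
    magnitude = abs(2 * math.cos(math.pi * freq_hz * delay_ms / 1000))
    return 20 * math.log10(magnitude) if magnitude > 0 else float("-inf")

print(notch_frequencies(1.0, 4))  # notches at 500, 1500, 2500 and 3500Hz
print(comb_gain_db(1000, 1.0))    # about +6dB: 1000Hz sits on a peak between notches
```

Halving the delay doubles the spacing between notches, which is why sweeping a very short delay (as a flanger does) produces that characteristic 'jet' sound.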

Chord

Two or more notes played at the same time.

Combo input

Audio interfaces may be furnished with XLR inputs, jack inputs or a mixture of the two. A combo input does exactly what you'd expect: it accepts either an XLR or a jack plug in a single socket, though not both at the same time, of course.

Compression (codec)

Audio file formats like WAV/AIFF can offer pristine sound quality, but they can also be very large. For convenience, audio is compressed into a smaller file size using codecs such as the ubiquitous MP3 and the free-to-use Vorbis (aka Ogg Vorbis.)

These aim to remove parts of an audio signal that listeners would - in theory - not be able to hear anyway, thereby reducing the space required to store the audio.

Naturally, the resulting decoded audio is not the same as the audio that went in; we call this lossy, as opposed to the lossless nature of WAV/AIFF.

In practice, this aggressive removal of parts of the audio signal can be audible, and the quality of the compressed audio depends mainly on the target bitrate (measured in kbps.)

A 128kbps MP3 is easy to spot, whereas a 320kbps MP3 can be practically indistinguishable from the WAV source. Beware of using these files for music-making, though, as processing can expose their hidden lack of quality.
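Since bitrate is simply data per second, it translates directly into file size. The sketch below compares a four-minute track at CD quality (1411.2kbps, uncompressed) with the two MP3 bitrates mentioned above, assuming a constant bitrate and ignoring headers and metadata.

```python
def file_size_mb(bitrate_kbps: float, seconds: float) -> float:
    """Approximate size in megabytes (10^6 bytes) of audio at a constant bitrate."""
    return bitrate_kbps * 1000 * seconds / 8 / 1e6

track_seconds = 4 * 60  # a four-minute track
for label, kbps in [("CD-quality WAV", 1411.2), ("320kbps MP3", 320), ("128kbps MP3", 128)]:
    print(f"{label}: {file_size_mb(kbps, track_seconds):.1f}MB")
# CD-quality WAV: 42.3MB
# 320kbps MP3: 9.6MB
# 128kbps MP3: 3.8MB
```

In other words, a 128kbps MP3 keeps roughly one-eleventh of the original data, so it's little wonder the losses can be audible.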

Compressor

One of the most important audio processors to get your head round. Music is a mixture of quiet and loud parts (dynamics) and, in simple terms, a compressor will act to reduce the difference between them, reducing the dynamic range.

Use compression to:

- Rein in a sloppy performance, bringing the notes to more consistent levels

- Add more perceived power to a signal

- 'Glue together' an entire mix or a sub-group of tracks, such as those of a drum kit

For more on the finer points of compression, check out Computer Music 170's huge compression feature. Or for more background on dynamics processing, read the ultimate guide to effects: dynamics.
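The core of a compressor is its 'static curve': below the threshold nothing happens, and above it every `ratio` dB of extra input produces only 1dB of extra output. The sketch below shows just that curve for a hard-knee compressor, with an assumed -20dB threshold and 4:1 ratio; real compressors add attack and release smoothing, knee shaping and makeup gain on top.

```python
def compressor_gain_db(level_db: float, threshold_db: float = -20.0, ratio: float = 4.0) -> float:
    """Static gain change (dB) applied by a hard-knee downward compressor.

    Below the threshold the signal is untouched; above it, every `ratio` dB of input
    increase yields only 1dB of output increase.
    """
    if level_db <= threshold_db:
        return 0.0
    over = level_db - threshold_db
    return over / ratio - over  # negative value = gain reduction

for level in (-30.0, -20.0, -8.0, 0.0):
    print(level, "->", level + compressor_gain_db(level))
# -30.0 -> -30.0
# -20.0 -> -20.0
# -8.0 -> -17.0
# 0.0 -> -15.0
```

The quietest and loudest inputs were 30dB apart; afterwards they're only 15dB apart. That's the reduced dynamic range described above, and turning the whole result back up (makeup gain) is where the extra perceived power comes from.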

Condenser microphone

Rather than the moving coil and magnet of a dynamic microphone, a condenser (sometimes called a capacitor) microphone uses two charged plates (a thin, moving diaphragm and a fixed backplate) to create an electrical signal from sound. While generally more expensive than a dynamic mic, a condenser typically captures high frequencies in more detail, providing a lighter, airier sound. Note that most condenser mics must be used with a mixer or audio interface capable of providing 48V phantom power; valve models with their own power supplies and battery-powered designs are the usual exceptions.

Convolution

Convolution allows us to take a sampled 'snapshot' of a process (ie, an effect) and apply its characteristic sound to another signal. Think of it as a sort of sampler, but one that samples an effect rather than actual sounds.

Note that convolution only works fully for so-called linear processes; non-linear aspects such as distortion, dynamic response or modulation will not be captured. Typical uses of convolution are for emulating speaker cabinets, EQ curves and the reverb of actual rooms. A captured 'snapshot' is called an impulse response.
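The operation itself is simple to state: every sample of the input triggers a copy of the impulse response, scaled by that sample's value, and all the copies are added up. The direct version below shows exactly that; the toy impulse response is invented for illustration, and real convolution reverbs use FFT-based methods because a room's impulse response can run to hundreds of thousands of samples.

```python
def convolve(signal: list[float], impulse_response: list[float]) -> list[float]:
    """Direct (time-domain) convolution: every input sample triggers a scaled copy of the IR."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, s in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += s * h
    return out

# Toy impulse response: the direct sound plus two quieter 'reflections'
ir = [1.0, 0.0, 0.5, 0.0, 0.25]
print(convolve([1.0], ir))        # a single click returns the impulse response itself
print(convolve([1.0, -1.0], ir))  # [1.0, -1.0, 0.5, -0.5, 0.25, -0.25]
```

Feeding in a single click returns the impulse response unchanged, which is why a recorded click or sweep in a real room is enough to capture that room's reverb.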

Core Audio

The under-the-hood audio system in macOS (formerly Mac OS X) and iOS, Core Audio provides built-in, low-latency audio playback and recording, MIDI, processing and more.

CPU

The CPU (Central Processing Unit) is your computer's calculating brain, often referred to as the 'processor'. Its clock speed is given in GHz (billions of cycles per second), a rough indication of how quickly it can perform calculations. Most modern CPUs consist of a number of 'cores', essentially duplicating the processing circuitry to allow multiple calculations to be performed at once.

Piling on too many plugins can tax your CPU, as shown by the CPU meter most DAWs provide. If you max out your CPU, you can expect the audio to break up and stutter as the computer fails to keep up. You can alleviate the situation by disabling some of the plugins (merely bypassing them doesn't always free up processing power) or freezing particularly intensive tracks.
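Why does the audio break up rather than just slowing down? Audio is typically processed in small blocks, or buffers, and each block has to be finished before the interface needs to play it. The sketch below assumes a 256-sample buffer at 44.1kHz (illustrative settings, not a recommendation) and works out that deadline; the helper names are hypothetical, and real DAW meters measure load in their own ways.

```python
def buffer_deadline_ms(buffer_size: int, sample_rate: int) -> float:
    """Time available to process one audio buffer before the interface needs it."""
    return 1000 * buffer_size / sample_rate

def cpu_load(processing_ms: float, buffer_size: int, sample_rate: int) -> float:
    """Fraction of the deadline used; at or above 1.0 the buffer arrives late and the audio drops out."""
    return processing_ms / buffer_deadline_ms(buffer_size, sample_rate)

print(buffer_deadline_ms(256, 44_100))  # about 5.8ms to process each 256-sample buffer
print(cpu_load(4.0, 256, 44_100))       # about 0.69, ie roughly 69% of the available time
```

This also explains why raising the buffer size can cure stuttering in a heavy project: each buffer gets more time, at the cost of extra latency.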

Crossfade

Just as the start or end of an audio sample can be faded in or out, two audio samples can be 'crossfaded' together, bringing the volume of the first down as the volume of the second rises. This is good for seamless looping of samples, avoiding the clicks and pops that could otherwise occur at the join between the two parts.
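Here's a sketch of a crossfade between two clips, overlapping the end of one with the start of the other. It offers two common fade shapes: linear, and 'equal power' (sine/cosine curves). The function name and the choice of curves are illustrative assumptions rather than any particular DAW's behaviour.

```python
import math

def crossfade(a: list[float], b: list[float], length: int, equal_power: bool = True) -> list[float]:
    """Join clip `a` to clip `b`, overlapping the last `length` samples of a with the first `length` of b."""
    if length > min(len(a), len(b)):
        raise ValueError("crossfade is longer than one of the clips")
    out = a[:len(a) - length]
    for i in range(length):
        t = (i + 0.5) / length  # position through the fade, between 0 and 1
        if equal_power:
            fade_out, fade_in = math.cos(t * math.pi / 2), math.sin(t * math.pi / 2)
        else:
            fade_out, fade_in = 1 - t, t
        out.append(a[len(a) - length + i] * fade_out + b[i] * fade_in)
    return out + b[length:]
```

Which shape works best depends on the material: an equal-power fade keeps the perceived loudness steady between two unrelated sounds, while a linear fade avoids a level bump in the middle when both sides are very similar, as is often the case when looping.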

Crossover

Splits a signal into two or more frequency bands that can then be processed separately before being recombined. The split occurs around a designated crossover frequency.
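A two-band split can be sketched very simply: run the signal through a low-pass filter set to the crossover frequency, and treat whatever the filter removed as the high band. Because high = input - low, the two bands always add back up to the original. The sketch assumes a basic one-pole low-pass with a gentle 6dB/octave slope; real crossovers in multiband processors typically use steeper filter designs, such as Linkwitz-Riley.

```python
import math

def split_bands(x: list[float], crossover_hz: float, sample_rate: int = 44_100):
    """Split x into (low, high) bands that sum back to the original signal.

    Uses a one-pole low-pass; the high band is whatever the low-pass removed.
    """
    coeff = math.exp(-2 * math.pi * crossover_hz / sample_rate)
    low, high, state = [], [], 0.0
    for s in x:
        state = (1 - coeff) * s + coeff * state  # one-pole low-pass filter
        low.append(state)
        high.append(s - state)
    return low, high

low, high = split_bands([1.0] * 5000, crossover_hz=200)
# Process the bands separately (here, just turning the highs down), then recombine
processed = [l + 0.5 * h for l, h in zip(low, high)]
```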

Cutoff/centre frequency

The frequency at which a filter comes into effect. For instance, take a high-pass filter that has been set to 'cut off' at 800Hz. Regardless of how steep the filter's slope is, 800Hz is the frequency at which the signal has been reduced by 3dB. For band-pass and peaking filters, the equivalent point is the centre frequency, around which the filter's effect is focused.
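You can check the 3dB figure numerically. The sketch below assumes an idealised Butterworth response, a common filter type whose slope is 6dB per octave per 'order' (so order 2 = 12dB/octave, order 4 = 24dB/octave); resonant synth filters, which boost around the cutoff, won't follow this curve. Frequencies must be above 0Hz.

```python
import math

def butterworth_gain_db(freq_hz: float, cutoff_hz: float, order: int = 1,
                        kind: str = "highpass") -> float:
    """Gain (dB) of an ideal Butterworth low-pass or high-pass filter at freq_hz (> 0)."""
    r = freq_hz / cutoff_hz
    if kind == "highpass":
        r = 1 / r  # a high-pass mirrors the low-pass response around the cutoff
    elif kind != "lowpass":
        raise ValueError("kind must be 'lowpass' or 'highpass'")
    return -10 * math.log10(1 + r ** (2 * order))

# High-pass at 800Hz: the gain at the cutoff is the same whatever the slope
for order in (1, 2, 4):
    print(order, round(butterworth_gain_db(800, 800, order), 2),   # -3.01 every time
          round(butterworth_gain_db(400, 800, order), 2))           # an octave below: -6.99, -12.3, -24.1
```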

Computer Music magazine is the world’s best selling publication dedicated solely to making great music with your Mac or PC computer. Each issue it brings its lucky readers the best in cutting-edge tutorials, need-to-know, expert software reviews and even all the tools you actually need to make great music today, courtesy of our legendary CM Plugin Suite.