The A to Z of computer music: I

Our dictionary of music making definitions reaches the letter I

For those illiterate in the industry lingo, we're here to issue some indispensable information on the ins and outs of the tools of the trade.

iLOK

PACE's iLok copy protection system uses a USB dongle to store user licences for applications and plugins from any developer that chooses to implement it. While the latest version, iLok 2, has proved secure (ie, it has not been cracked), recent serious (but now seemingly resolved) problems with an update to its new License Manager software have irritated users and developer partners alike.

Beyond that, the concept of having to keep a USB key connected to your computer in order to use software that you've paid for is always going to be controversial, although the method's portability between systems is a definite plus.

Image/imaging

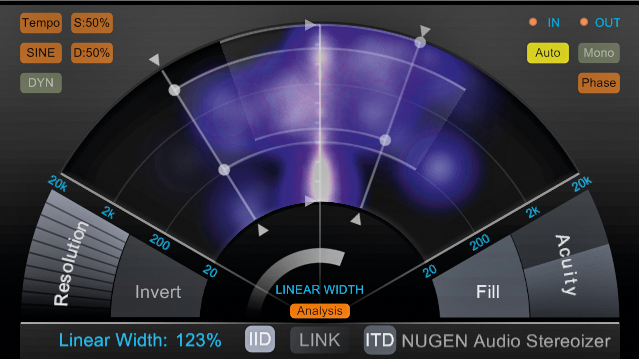

The arrangement and balance of sounds in a stereo panorama from left to right and - less literally - 'front' to 'back' is known as the stereo image. While placing signals between the left and right channels of a stereo pair is (generally speaking) a simple matter of adjusting their pan pots in the mixer and/or applying effects plugins with stereo positioning controls (reverb, delay, etc), working in a sense of perceived depth is a little trickier. There are numerous plugins on the market designed to help with imaging, often utilising mid/side techniques and psychoacoustic processing.
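To give a flavour of the mid/side techniques mentioned above, here's a minimal Python sketch of a stereo width control. It's an illustration only, not a description of how any particular plugin works: the function names and the use of plain lists of samples are our own assumptions. The mid signal is the average of left and right, the side signal is half their difference, and scaling the side signal narrows or widens the image.

```python
def encode_mid_side(left, right):
    """Split a stereo pair into mid (centre) and side (difference) signals."""
    mid = [(l + r) / 2 for l, r in zip(left, right)]
    side = [(l - r) / 2 for l, r in zip(left, right)]
    return mid, side


def decode_mid_side(mid, side):
    """Rebuild left and right channels from mid and side signals."""
    left = [m + s for m, s in zip(mid, side)]
    right = [m - s for m, s in zip(mid, side)]
    return left, right


def adjust_width(left, right, width):
    """Scale the side signal: 0.0 gives mono, 1.0 leaves the image unchanged."""
    mid, side = encode_mid_side(left, right)
    side = [s * width for s in side]
    return decode_mid_side(mid, side)


# Hypothetical two-sample stereo signal, purely for demonstration
left, right = [1.0, 0.5], [0.0, 0.5]
print(adjust_width(left, right, 0.0))
print(adjust_width(left, right, 1.0))
```

A width of 0 collapses the signal to mono, 1 returns it untouched, and values above 1 exaggerate the difference between the channels.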

Input

The entry point on one device (a mixer channel or plugin effect, say), designed to accept the output signal from another device (eg, an instrument or microphone). Inputs can be virtual or physical - plugging a microphone into an audio interface is done via a hardware input, for example, while connecting the resultant output from the audio interface to a reverb plugin in your DAW is done via a virtual input (an insert point - see below - or effects send).

Inputting audio from the outside world into the software domain inevitably involves a degree of processing latency, which is simply the delay introduced by your computer and audio interface converting the analogue signal into a digital data stream and back again, and the processing that happens while the signal is inside the computer.
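As a rough worked example, and assuming that your DAW passes audio to and from the interface in fixed-size buffers (a detail not covered above), the delay contributed by a single buffer is simply its length in samples divided by the sample rate. The function names below are hypothetical, and real-world round-trip latency will be higher than this estimate, since it also includes converter and driver delays.

```python
def buffer_latency_ms(buffer_size, sample_rate):
    """Delay in milliseconds contributed by one buffer of audio."""
    return buffer_size / sample_rate * 1000.0


def minimum_round_trip_ms(buffer_size, sample_rate):
    """One buffer on the way in plus one on the way out.

    Assumption: converter and driver delays are ignored, so this is
    a lower bound rather than a measurement.
    """
    return 2 * buffer_latency_ms(buffer_size, sample_rate)


for size in (64, 256, 1024):
    per_buffer = round(buffer_latency_ms(size, 44100), 2)
    print(size, "samples:", per_buffer, "ms per buffer")
```

A 256-sample buffer at 44.1kHz works out at around 5.8ms each way, which is why smaller buffer sizes are generally preferred for recording.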

In order to enable monitoring of the input signal with practically no delay, many audio interfaces feature 'direct monitoring'. This duplicates the input signal, sending one copy to the computer for recording (delayed as described) and the other directly to the interface's monitor outputs for latency-free listening, albeit without any of the processing offered by the computer (plugin effects).


Insert

A point in any mixer channel (hardware or software) at which the input and output of an effects or dynamics processor are connected in order to route 100% of the channel's input signal through it for processing. In a DAW mixer, insert slots give access to your menu of plugin effects, several of which can be loaded into a channel at once, processing the signal in top-down series.

Although send effects are considered the alternative to insert effects, the auxiliary channel used for the send/return loop is in fact just another mixer channel with effects inserted into it, the difference being that, rather than that channel being connected to a single track in a multitrack project, it's instead capable of receiving parallel user-adjustable input from multiple channels at once.
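The difference between the two routings can be sketched in a few lines of Python. This is a toy model under our own assumptions, not the workings of any real DAW: effects are plain functions acting on lists of samples, and every name here (`gain`, `clip`, `run_inserts`, `mix_with_send`) is hypothetical.

```python
def gain(amount):
    """Return an effect that multiplies every sample by 'amount'."""
    return lambda samples: [s * amount for s in samples]


def clip(limit):
    """Return an effect that hard-clips samples to +/- 'limit'."""
    return lambda samples: [max(-limit, min(limit, s)) for s in samples]


def run_inserts(samples, inserts):
    """Pass 100% of the signal through each insert in top-down series."""
    for effect in inserts:
        samples = effect(samples)
    return samples


def mix_with_send(dry_channels, send_levels, aux_inserts):
    """Sum the dry channels with one auxiliary channel fed by sends.

    Each channel contributes a user-set level to the aux input; the aux
    channel is just another channel with its own insert chain.
    """
    length = len(dry_channels[0])
    aux_input = [0.0] * length
    for channel, level in zip(dry_channels, send_levels):
        for i, sample in enumerate(channel):
            aux_input[i] += sample * level
    aux_output = run_inserts(aux_input, aux_inserts)
    dry_sum = [sum(column) for column in zip(*dry_channels)]
    return [d + w for d, w in zip(dry_sum, aux_output)]


# Insert order matters: gain-then-clip differs from clip-then-gain
print(run_inserts([0.5, 1.0], [gain(2.0), clip(1.0)]))
print(run_inserts([0.5, 1.0], [clip(1.0), gain(2.0)]))

# Two channels sharing one aux effect at different send levels
print(mix_with_send([[1.0, 0.0], [0.0, 1.0]], [0.5, 0.25], [gain(2.0)]))
```

Note that the dry signals pass straight through in `mix_with_send`, with the processed aux signal added alongside them, whereas `run_inserts` replaces the signal entirely at each stage.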

Instrument

An instrument is anything used to make musical sounds, of course, but in the context of computer music, the word is generally prefixed with 'virtual'. The biggest revolution in music technology of the last 15 years has been the proliferation of increasingly powerful and affordable software instruments.

Broadly speaking, there are two types of virtual instrument: synthesisers and sample players. Synthesisers use algorithmically modelled oscillators to generate their basic tones for shaping with filters, envelopes, etc, while sample players use digital audio recordings (samples) as their raw material. Sample player engines can be used to construct incredibly lifelike virtual pianos, guitars, drums, brass, strings, etc, as well as classic synth emulations, sonically elaborate 'power synths' and even vocalists. These instruments are often referred to as ROMplers; a portmanteau of 'ROM' and 'sampler', this name harks back to the early days of sample-based sound modules, which stored their fixed sound libraries in Read Only Memory (ROM).

Debate continues to rage as to whether virtual analogue synths can ever sound as good as their real-world counterparts, but we're absolutely unequivocal in our opinion that they already do - in fact, we'd always favour the price, convenience and stability of, say, a virtual Minimoog over the real thing, particularly given that recent emulations really couldn't be described as sounding in any way lacking.

Intel

The world's biggest manufacturer of microprocessors, Intel was founded in 1968 and now makes CPUs for the majority of Windows PCs, as well as all of Apple's desktop and notebook computers. They also produce graphics chips, solid-state drives, mobile CPUs and more, but it's their x86-based Mac/PC CPUs that form the core of their business.

Inter-app audio

Part of Apple's iOS 7, which is available now (although, at the time of writing, it's having some major audio issues), Inter-App Audio is a new library of APIs that enable apps running on iPhone, iPad or iPod touch to send and receive audio streams to and from each other.

Thus, a soft synth app could send its output to a DAW app, perhaps via an effects processing app, taking us a big step closer to the dream of the full-on mobile music studio. The majority of IAA's functionality actually already exists in the form of A Tasty Pixel's Audiobus app. Still, IAA promises to improve on Audiobus's feature set and, being part of the OS itself, obviously benefits in terms of developer support and the user not having to invest in a third-party solution.

Interface

Any point at which a computer or software application connects to a peripheral device (which includes you, the user!) is an interface, and there are many types of interface involved in computer-based music production. An audio interface, for example, enables you to connect your DAW to your speakers for output, and microphones and external instruments (guitars, etc) for input; while a MIDI interface accepts input from and delivers output to MIDI devices of all kinds. Other more general computing interface types include USB, FireWire and the graphical realisations, analogies and metaphors through which you interact with your operating system, DAW and plugins (the Graphical User Interface, or GUI).


Intro

The opening section of a song, in which you'll generally either set out your stall for what's to come, or - less commonly - play with your listener's assumptions by creating something wholly at odds with the rest of the track. Like other song sections, the intro will usually be 4, 8, 16 or 32 bars long. It can also be repeated later in the track or revisited stylistically for the outro.

I/O

Input/Output. In digital music production, commonly used in reference to the combined input (signals entering) and output (signals exiting) capabilities of a device. If, for example, you see an audio interface described as sporting both analogue and digital I/O, it means that it's built with both analogue and digital inputs and outputs onboard, for sending and receiving signals to and from guitars, microphones, loudspeakers, etc, as well as digital devices such as DAT recorders and other audio interfaces.

iOS

The operating system behind Apple's iPhone, iPad and iPod touch, iOS is a surprisingly capable platform for music production thanks to its OS X roots (and the excellent Core Audio and Core MIDI APIs that come with them, as well as the new Inter-App Audio functionality - see above) and responsive touchscreen interface.

While it would be stretching the truth to suggest that you could cheerfully produce complete 'pro-quality' tracks from start to finish using iOS apps, that day is surely coming; and for MIDI control, live performance and light, on-the-go production, iOS (and, to a lesser extent, its less audio-centric rival Android) is a miracle of modern computing.

Perhaps surprisingly, Apple's only contributions to the iOS music scene have been GarageBand and their recent Logic Remote controller for Logic Pro X. Still, both are absolutely excellent examples of a mobile DAW and MIDI controller respectively.

Iterative quantise

While 'regular' quantise snaps selected notes rigidly to a note-value-based rhythmic grid, iterative quantise - an option in a few DAWs, including Cubase, Nuendo and Renoise - lets you specify a percentage amount of movement towards said grid. So with a setting of 50%, off-grid notes will move halfway closer to the nearest grid division. It's useful for tightening up loose MIDI parts without making them sound overly robotic or programmed.
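The idea boils down to a single line of arithmetic, sketched here in Python. This is a minimal illustration rather than the algorithm used by any of the DAWs named above; the function name, the representation of note positions in beats and the `strength` parameter are all our own assumptions.

```python
def iterative_quantise(positions, grid, strength=0.5):
    """Move each note part of the way towards its nearest grid division.

    positions: note start times in beats (hypothetical representation)
    grid: grid spacing in beats, eg 0.25 for 16th notes in 4/4
    strength: 0.0 leaves notes untouched, 1.0 is regular quantise
    """
    result = []
    for position in positions:
        # Nearest grid line; notes exactly halfway between two lines
        # fall to whichever side Python's round() happens to pick
        nearest = round(position / grid) * grid
        result.append(position + (nearest - position) * strength)
    return result


notes = [0.07, 0.5, 1.18]
print(iterative_quantise(notes, grid=0.25, strength=0.5))
```

With a strength of 0.5 on a 16th-note grid, the note at beat 1.18 moves to about 1.215, halfway towards the grid line at 1.25, while the note already sitting on the grid at 0.5 stays put. Applying the function repeatedly pulls the part progressively tighter.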

Computer Music magazine is the world’s best-selling publication dedicated solely to making great music with your Mac or PC. Each issue brings its lucky readers the best in cutting-edge tutorials, need-to-know features, expert software reviews and even all the tools you actually need to make great music today, courtesy of our legendary CM Plugin Suite.