What does the future of computer music making look like?

We attempt to predict the future of music technology, with the help of a few brands at the cutting edge.


Music Tech Showcase 2021: Since computer-based music making became an accessible, realistic proposition in the 1990s, technology has come on in leaps and bounds. If the past few decades are anything to go by, we can expect a raft of revolutionary new technologies in the coming years allowing us to do things once thought impossible.

Sometimes the future is simply unknowable – though the next few baby steps towards tomorrow’s major innovations are already being taken. While computer-based DAWs show no major sign of being superseded, the architecture of computer music software itself has undergone radical transformations.

Clever clogs

Increasingly, over the last ten years, technologies such as artificial intelligence and forensic audio analysis have opened our eyes to the potential for breaking down barriers that once seemed insurmountable.

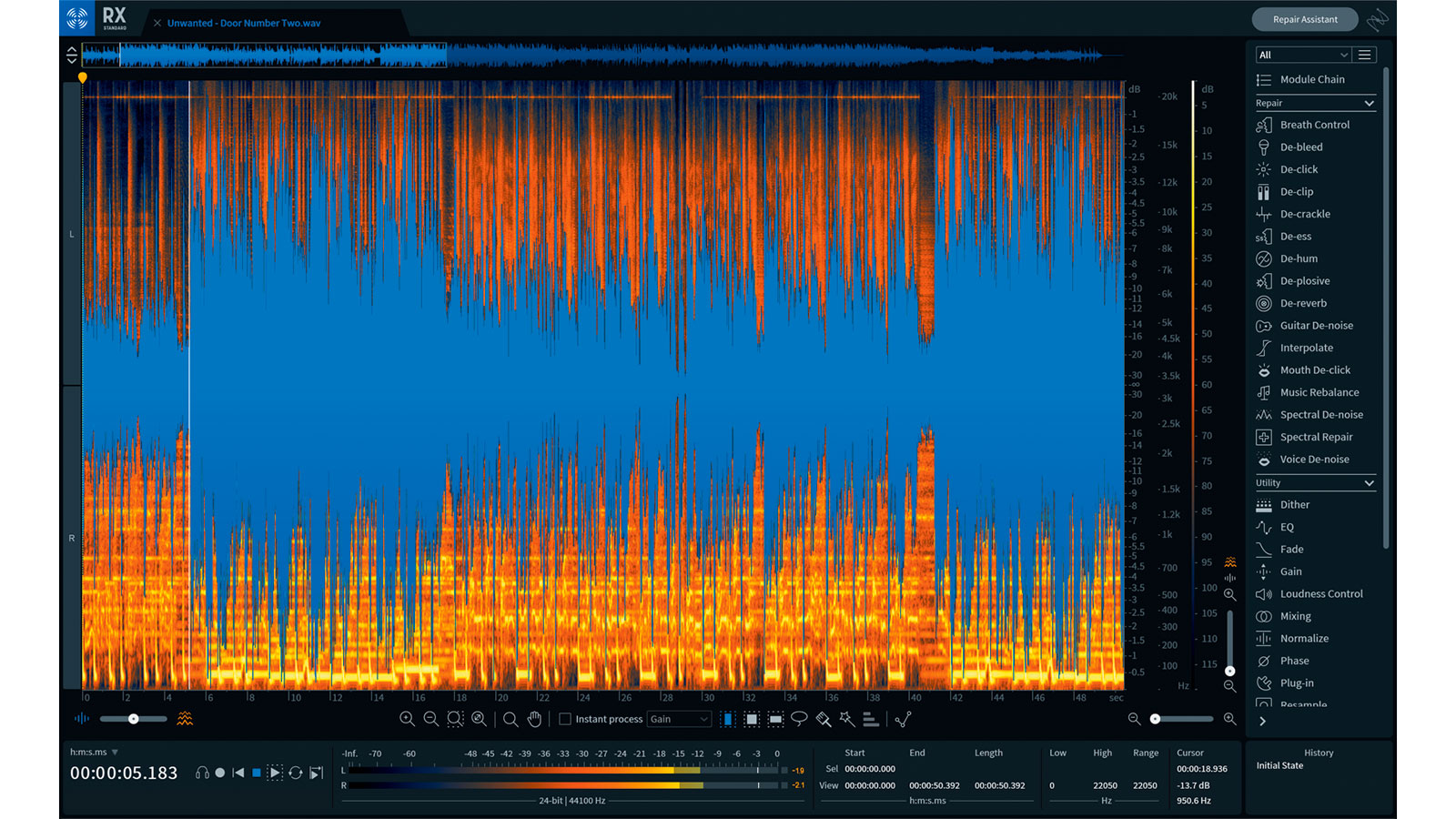

Take iZotope’s astounding breakthroughs with its RX audio suite, largely dependent on artificial intelligence – something the 1990s bedroom producer would have regarded as science fiction.

“iZotope has always strived to bring audio tech into the future, to do the impossible, and to do it with intelligence – so we can make it easy for anyone, whether a beginner or a professional, to dial in the sound that they want and discover the sound they need,” the company tells us.


We asked how iZotope incorporates machine learning, and what the term means in this context.

“Machine learning refers to a class of algorithms that discover patterns in data and use those discovered patterns to make predictions when presented with new data.


“Some of the most familiar applications of machine learning are speech recognition (like Siri) or image recognition where algorithms automatically label items, places, and faces in images (like Facebook photo tag suggestions).

“What the machine learning applications mentioned above have in common is that they label content but don’t process it; that is to say, a speech recognition system doesn’t modify your speech. Some of the modules in RX, such as Dialogue Isolate and De-rustle, actually process audio using an algorithm trained with machine learning.

“To process audio in RX, our algorithm needs to make a decision about the amount of dialogue present in each pixel of the spectrogram, which corresponds to over 100,000 decisions per second of audio!”
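To put the “over 100,000 decisions per second” figure in context, here is a minimal Python sketch of the arithmetic, plus the simplest form such a per-pixel decision can take: a soft mask that scales each time-frequency cell by a value between 0 and 1. The sample rate, FFT size and hop size are assumptions chosen for illustration (the article doesn’t give RX’s settings), the function names are hypothetical, and this is not RX’s actual algorithm.

```python
# Illustrative STFT settings (assumptions): RX's real analysis parameters
# are not given in the article.
SAMPLE_RATE = 44_100  # samples per second
FFT_SIZE = 2048       # samples per analysis window
HOP_SIZE = 256        # samples between the starts of successive windows


def pixels_per_second(sample_rate, fft_size, hop_size):
    """Number of spectrogram cells ('pixels') per second of audio."""
    frames_per_second = sample_rate / hop_size
    bins_per_frame = fft_size // 2 + 1  # non-negative frequency bins of a real FFT
    return frames_per_second * bins_per_frame


def apply_soft_mask(magnitudes, mask):
    """Scale each time-frequency cell by a 0..1 estimate of how much dialogue it holds.

    Both arguments are lists of rows (e.g. one row per frame). Mask values are
    clamped to [0, 1]: 1 keeps a cell untouched and 0 silences it.
    """
    return [
        [m * max(0.0, min(1.0, w)) for m, w in zip(mag_row, mask_row)]
        for mag_row, mask_row in zip(magnitudes, mask)
    ]


if __name__ == "__main__":
    rate = pixels_per_second(SAMPLE_RATE, FFT_SIZE, HOP_SIZE)
    print(f"{rate:,.0f} per-pixel decisions per second of audio")
    # Hand-written mask values stand in for a trained model's output.
    print(apply_soft_mask([[1.0, 2.0], [3.0, 4.0]], [[1.0, 0.0], [0.5, 1.5]]))
```

With these assumed settings the spectrogram has 1,025 frequency bins in each of roughly 172 frames per second, or about 176,572 cells per second, comfortably above the figure iZotope quotes. In a real tool the mask values would come from the trained model rather than being typed in by hand.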

It’s hugely impressive, but before we get ahead of ourselves and start envisaging a world of algorithm-led software, it’s worth noting that iZotope’s main aim is to repair damaged or coarse audio. The company also stresses that these routines are built on the expertise of its staff, who train the algorithms over time.
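The definition of machine learning quoted above, discovering patterns in data and then using them to make predictions on new data, can be illustrated with one of the simplest possible learners, a nearest-centroid classifier. This Python sketch is a stand-in for illustration only: the two features and the ‘dialogue’/‘noise’ labels are hypothetical, and the trained models in RX are far more sophisticated.

```python
from statistics import mean


def train_centroids(examples):
    """'Discover patterns': average the feature vectors seen for each label."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(column) for column in zip(*vectors))
        for label, vectors in by_label.items()
    }


def predict(centroids, features):
    """'Make predictions': return the label whose centroid is nearest."""
    def squared_distance(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    return min(centroids, key=lambda label: squared_distance(centroids[label], features))


if __name__ == "__main__":
    # Hypothetical features: (energy in a speech-frequency band, spectral flatness).
    # The labels play the role of examples tagged by human experts.
    training = [
        ((0.9, 0.2), "dialogue"),
        ((0.8, 0.3), "dialogue"),
        ((0.2, 0.9), "noise"),
        ((0.1, 0.8), "noise"),
    ]
    centroids = train_centroids(training)
    print(predict(centroids, (0.7, 0.35)))  # an unseen example; prints: dialogue
```

Training here is just averaging labelled examples; the point is the split between a learning step that summarises data and a prediction step that applies that summary to something it hasn’t seen before.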

Rebuild not bolt-on

It’s a similar story with Hit ’n’ Mix, whose RipX technology takes a hard turn away from the traditional DAW ecosystem. Its two modules, DeepRemix and DeepAudio, let users create stems from bounced audio files and dig deep into audio post-production, respectively.


“To produce technology and tools that are truly unique, I find it important to maintain a distance from existing audio software, and in fact hadn’t opened a DAW until recently,” Hit ’n’ Mix’s Jeremy Lloyd tells us.

“It’s crucial to focus on getting the core of the technology and UI as simple and perfect as possible. This means thinking about virtually every eventuality, now and in the future, that is likely to be expected of it.

“Much age-old software has additional features bolted on, seemingly without regard to the overall effect this might have on the usability of the software, or how a newcomer is supposed to learn all the tools on offer. I believe we are in a strong position to keep RipX powerful, yet pure and approachable, for years to come.”

We’re certainly keeping an eye on it, as well as numerous other disruptive new approaches across the software spectrum.

Tomorrow’s world

If the last few decades have proven anything, it’s that we can’t really predict what’s to come for home producers – so many micro steps, cultural trends and attitudes have led to the current computer music paradigm.

A few exciting things, however, seem certain:

- That ever-greater processing power and faster internet connections will open up far more opportunities for live global collaboration

- That developments in artificial intelligence will open even more (previously obstructed) creative pathways

- That the increasingly blurred distinctions between mobile devices and computers will become unimportant, as we move away from local hard-drive storage and come to rely instead on humongous cloud drives
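Live collaboration across continents runs into a floor that faster connections can’t remove: the time a signal takes to physically travel. This minimal Python sketch estimates one-way delay as propagation time plus audio buffering, assuming light in optical fibre covers roughly 200,000 km per second (about two-thirds of its speed in a vacuum) and one audio buffer at each end. Routing, encoding and jitter-buffer delays are ignored, and the function name and parameters are hypothetical.

```python
FIBRE_SPEED_KM_PER_S = 200_000  # approximate speed of light in optical fibre


def one_way_latency_ms(distance_km, buffer_samples, sample_rate, buffers=2):
    """Rough one-way delay in milliseconds: signal travel time plus audio buffering.

    `buffers` counts audio buffers along the path (assumed: one at each end).
    Routing, encoding and jitter-buffer delays are deliberately ignored.
    """
    propagation_s = distance_km / FIBRE_SPEED_KM_PER_S
    buffering_s = buffers * buffer_samples / sample_rate
    return (propagation_s + buffering_s) * 1000


if __name__ == "__main__":
    # Hypothetical 5,600 km fibre route, 256-sample buffers at 48 kHz.
    print(f"{one_way_latency_ms(5_600, 256, 48_000):.1f} ms")
```

For that hypothetical route the estimate is about 38.7ms one way: 28ms of travel time plus roughly 10.7ms of buffering. Faster processors help by making smaller buffers practical, but distance still sets the minimum.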

Fundamentally, however, the age-old giddy thrill of making, recording and producing a brand new track will be there in 2050, just as it is today.

A token gesture

While musicians and producers around the world bemoan the meagre remuneration generally offered by streaming, the rise of NFTs (non-fungible tokens) potentially opens up new financial opportunities for creatives of all stripes.

NFTs are essentially digital tokens of ownership for individual pieces of artwork (including tracks and albums), with ownership recorded on a shared digital ledger called a blockchain.
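The ledger idea can be sketched as an append-only list in which each record stores the hash of the one before it, so quietly altering an old ownership record breaks the link to the record that follows. This Python toy is an illustration under heavy assumptions, not how any real NFT platform works: it has no cryptocurrency, no network of independent nodes and no consensus mechanism, and the token and owner names are hypothetical. (On Ethereum, for example, NFTs usually follow the ERC-721 token standard.)

```python
import hashlib
import json


def record_hash(record):
    """SHA-256 over a canonical JSON encoding of one ledger entry."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_transfer(ledger, token_id, new_owner):
    """Append an ownership record linked to the hash of the previous entry."""
    prev_hash = record_hash(ledger[-1]) if ledger else "0" * 64
    ledger.append({"token": token_id, "owner": new_owner, "prev": prev_hash})
    return ledger


def chain_is_intact(ledger):
    """True if every entry references the hash of the entry before it."""
    return all(
        ledger[i]["prev"] == record_hash(ledger[i - 1]) for i in range(1, len(ledger))
    )


def current_owner(ledger, token_id):
    """The most recent owner recorded for a token, or None if it never appears."""
    owner = None
    for entry in ledger:
        if entry["token"] == token_id:
            owner = entry["owner"]
    return owner


if __name__ == "__main__":
    ledger = []
    append_transfer(ledger, "track-001", "artist")
    append_transfer(ledger, "track-001", "fan-42")
    print(current_owner(ledger, "track-001"), chain_is_intact(ledger))
    ledger[0]["owner"] = "someone-else"  # tamper with history
    print(chain_is_intact(ledger))
```

Note that the ledger only records who owns the token; nothing in it stops the audio file itself from being copied, which is exactly the point the article makes about ‘original’ versions.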

Using cryptocurrency, fans can bid to own the ‘original’ version of a digitally released track or album, despite the fact that the music can still be copied.

Though it’s a little bit of a rich kid’s playing field right now, the concept of artists making revenue from fans who bid to own NFTs of original tracks is actually a sound idea – it could lead to a more democratic and financially healthier music industry.

Computer Music magazine is the world’s best selling publication dedicated solely to making great music with your Mac or PC computer. Each issue it brings its lucky readers the best in cutting-edge tutorials, need-to-know, expert software reviews and even all the tools you actually need to make great music today, courtesy of our legendary CM Plugin Suite.