Hands-on: IK Multimedia iRing

We test this new iOS motion controller system

IK Multimedia is probably the world's most prolific manufacturer/developer of audio and MIDI apps and hardware for iPad, iPhone and iPod touch, so it comes as no great surprise to see the company make it to market with the first genuinely capable camera-driven motion-control system for iOS-happy musicians and producers.

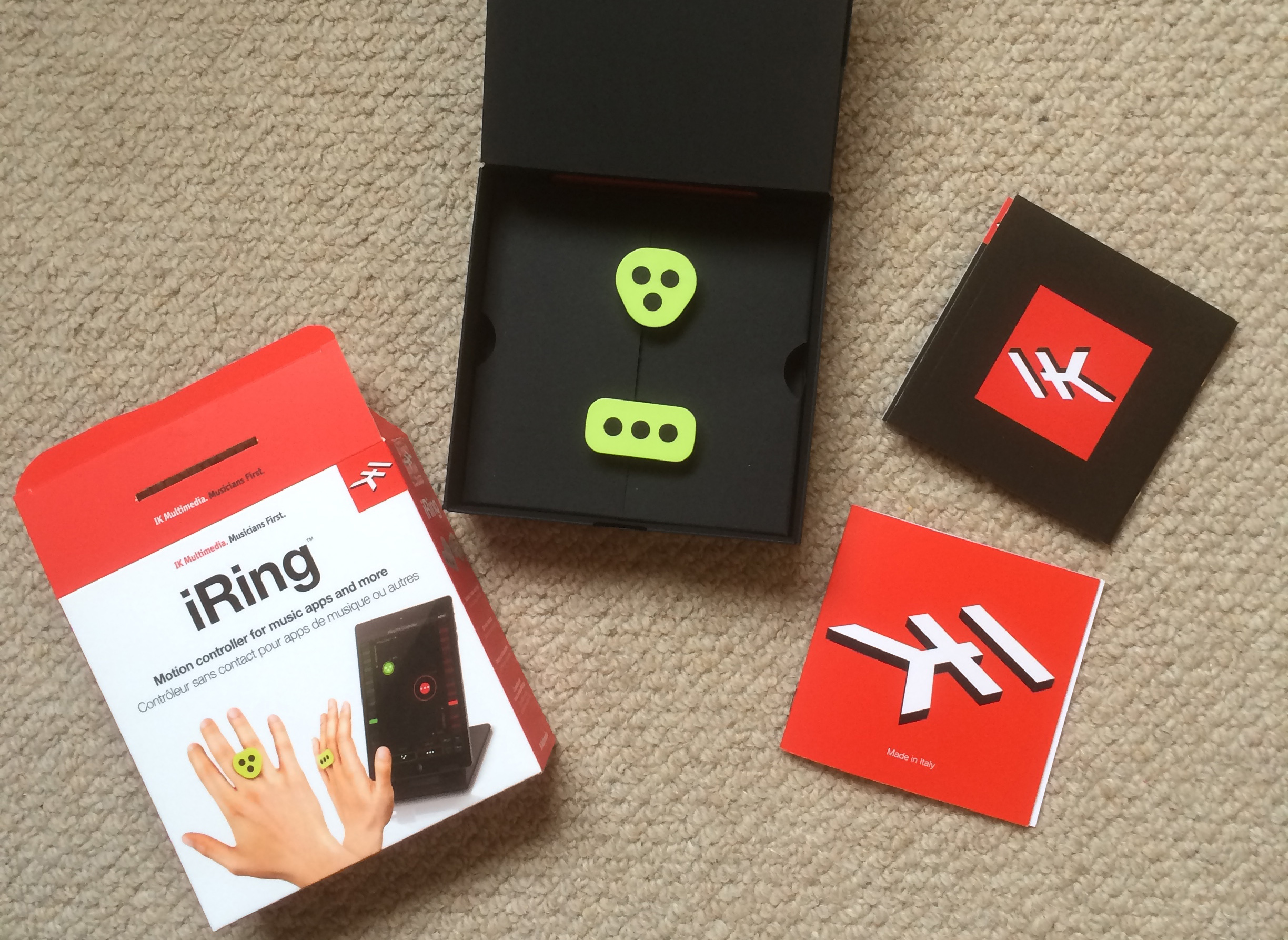

The £20 (inc VAT)/€20 (ex VAT) iRing package (which is shipping from today) comprises a pair of identical white, grey or green plastic 'rings' that are actually just hourglass-shaped frames, held (effortlessly, it must be said) between two fingers rather than mounted on one.

A panel on one side of each iRing features three black dots engraved in a triangular configuration, while another panel on the opposite side hosts three dots arranged in a row.

The idea is that you wear an iRing on each hand - one with the triangular dots facing out, the other the linear dots - and wave them about in front of your iDevice camera (front or back), whereupon their movement in 3D space is tracked using "advanced volumetric positioning algorithms" and fed to your iRing-compatible app, which sees them as two separate controllers.

One iRing to rule them all

Right now, iRing is compatible with four apps, all of which are available for free on the App Store: GrooveMaker 2 Free, iRing Music Maker, DJ Rig Free for iPad and iRing FX/Controller. For this hands-on, we're only looking at the last of these, which is the one that we're guessing IK hopes will truly sell the system to musicians (the first two are essentially prefab 'groove palette' apps of limited creative utility, and the third is for amateur DJs rather than producers).

iRing FX/Controller serves two roles. The first is as an AudioBus- and Inter-App Audio-compatible multi-effects processor through which you can route your other music apps or an external input and treat them to motion/gesture-controlled filter, phasing, delay, chorus, etc. This is mildly useful for live performance and certainly fun, but not really any reason for the serious muso to get involved.

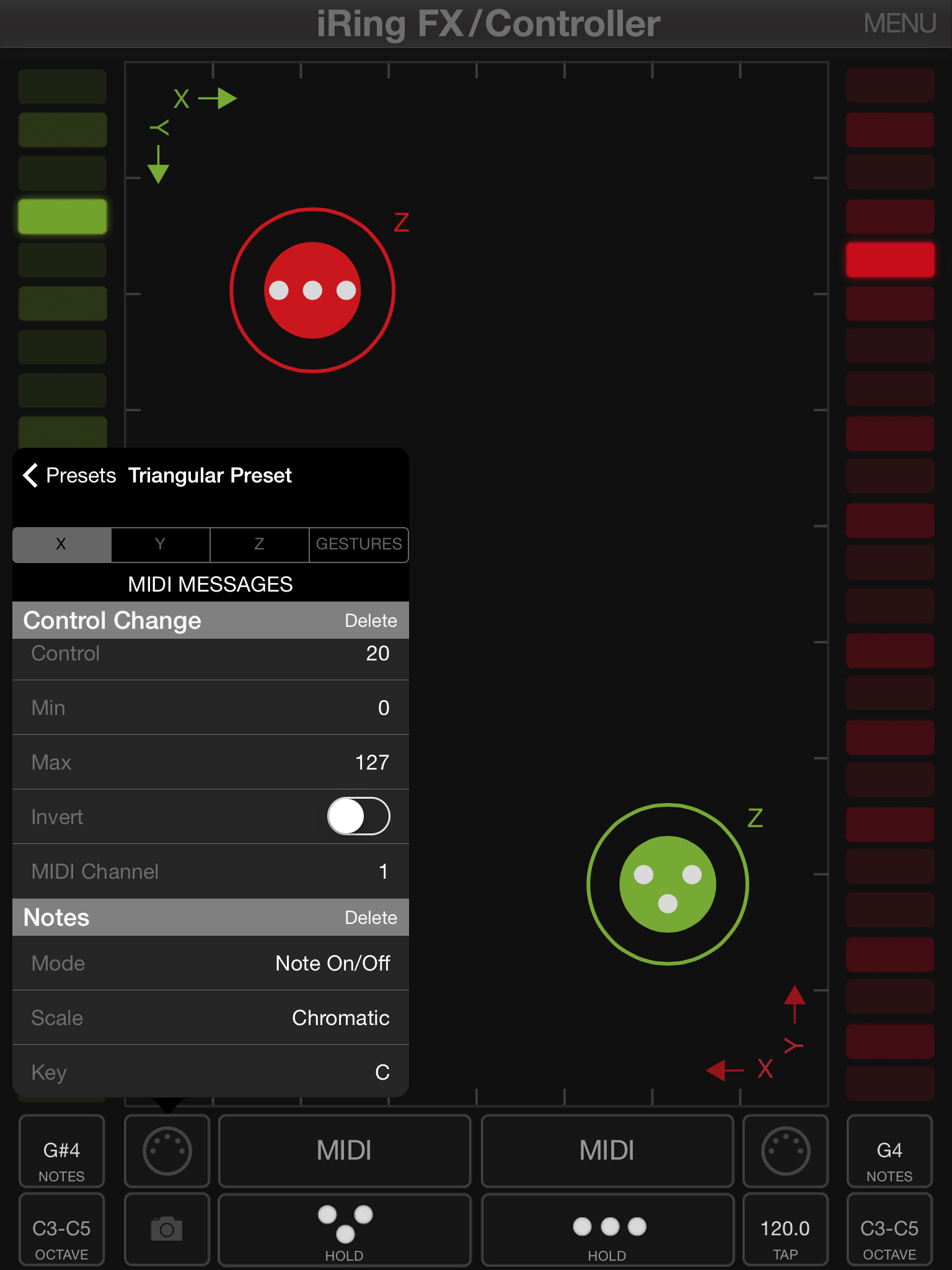

In its other guise, however, the app turns iRing into a deeply-configurable MIDI controller for manipulating MIDI-compatible apps on your iOS device, as well as DAWs, plugins and standalone instruments and effects on your Mac, via Virtual MIDI, Wi-Fi or a physical MIDI interface.

Within the app, you can assign MIDI CC, note, pitchbend, Program Change, Aftertouch and MMC messages to all three movement axes for each ring - left/right (X), up/down (Y) and forward/back (Z) - as well as a good range of gestures: Rotate Left and Right, Punch, Exit (leaving the camera's line of sight in all four directions), and Show/Hide. Each axis and gesture can send as many simultaneous control signals as you like, and each signal is fully user-definable - MIDI channel, MIDI CC number, value/note range (scale, key and octave spread for the latter), inversion, etc.
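To make the axis-to-CC idea concrete, here is a minimal Python sketch of how one axis position might be turned into several simultaneous Control Change messages. It is not IK's code: the `CCAssignment` class, its field names and the assumption that the tracker reports each axis as a position between 0.0 and 1.0 are all hypothetical. Only the three-byte Control Change layout (status `0xB0` plus channel, then controller number, then value) comes from the MIDI standard.

```python
from dataclasses import dataclass


@dataclass
class CCAssignment:
    """One user-defined control signal for an axis (hypothetical structure)."""
    channel: int            # MIDI channel as shown to users, 1-16
    controller: int         # MIDI CC number, 0-127
    low: int = 0            # bottom of the value range
    high: int = 127         # top of the value range
    inverted: bool = False  # flip the direction of travel

    def message(self, position: float) -> bytes:
        """Build a 3-byte Control Change message for a 0.0-1.0 axis position."""
        position = min(1.0, max(0.0, position))  # clamp out-of-range input
        if self.inverted:
            position = 1.0 - position
        value = self.low + round(position * (self.high - self.low))
        status = 0xB0 | (self.channel - 1)       # Control Change status byte
        return bytes([status, self.controller, value])


def axis_messages(position: float, assignments: list[CCAssignment]) -> list[bytes]:
    """One axis can drive any number of assignments at once."""
    return [a.message(position) for a in assignments]


# Example: the Y axis of one ring drives filter cutoff (CC 74) over the full
# range and, inverted over a narrower range, resonance (CC 71) on channel 2.
y_axis = [CCAssignment(1, 74), CCAssignment(2, 71, low=20, high=100, inverted=True)]
messages = axis_messages(1.0, y_axis)
```

With the hand at the top of the frame (position 1.0), the first assignment sends the maximum value of 127, while the inverted one sends its own minimum of 20.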

You can save any number of setups as presets for recall later, and the GUI shows all your movements in real time (green and red 'pucks' representing the triangular and linear iRings respectively), with or without the actual camera feed (ie, your face and hands) visible as a backdrop.

It's every bit as straightforward yet flexible as it sounds, and in our testing, using Ableton Live, we had a great time flinging all manner of instrument, effect, clip and mixer parameters around… eventually.

You see, at the time of writing there's something of an issue with iRing FX/Controller in that assigning the MIDI CCs generated on the X, Y and Z axes using MIDI learn is a truly maddening process. Even the slightest movement in any direction is registered and learnt by the target parameter, so preventing your DAW or plugin from reading an X movement when you want it to acknowledge a Z movement, for example, can be nigh on impossible.

Happily, we can report that, when we pointed this out to IK, it vowed to fix it in an upcoming update by including a mute button in the assignment editor layout, enabling each one to be turned off temporarily.
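As a rough illustration of why a mute switch would solve the MIDI learn problem, the Python sketch below silences every axis except the one being learnt, so the target parameter only ever sees a single CC. This is a guess at the general approach, not a description of IK's update: `AxisAssignment`, `solo_for_learn` and `outgoing_cc` are hypothetical names, and axis positions are assumed to arrive as values between 0.0 and 1.0.

```python
from dataclasses import dataclass


@dataclass
class AxisAssignment:
    """A single axis-to-CC mapping with a mute switch (hypothetical)."""
    axis: str            # "X", "Y" or "Z"
    controller: int      # MIDI CC number, 0-127
    muted: bool = False  # muted assignments send nothing


def solo_for_learn(assignments: list[AxisAssignment], axis: str) -> None:
    """Mute every assignment except those on the axis we want learnt."""
    for a in assignments:
        a.muted = a.axis != axis


def outgoing_cc(assignments: list[AxisAssignment],
                positions: dict[str, float],
                channel: int = 1) -> list[bytes]:
    """Return Control Change messages for the unmuted assignments only."""
    messages = []
    for a in assignments:
        if a.muted:
            continue
        position = min(1.0, max(0.0, positions[a.axis]))
        value = round(position * 127)
        messages.append(bytes([0xB0 | (channel - 1), a.controller, value]))
    return messages


# Example: the hand drifts on all three axes, but only Z reaches the DAW,
# so MIDI learn can only pick up CC 22.
ring = [AxisAssignment("X", 20), AxisAssignment("Y", 21), AxisAssignment("Z", 22)]
solo_for_learn(ring, "Z")
sent = outgoing_cc(ring, {"X": 0.2, "Y": 0.9, "Z": 1.0})
```

Once the parameter has been learnt, clearing the `muted` flags restores normal three-axis output.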

Anyway, none of this is an issue with apps and plugins that offer manual assignment, of course, but you might be surprised at how few actually do that these days - Live doesn't, to give but one high-profile example.

Also, beyond a certain speed of hand movement, the controller output on the X and Y axes jumps instantly from start to end value, rather than gliding quickly. We suspect this is a limitation of the camera rather than the app, and below this fairly high speed threshold the output response is perfectly smooth, although the movement of the graphical pucks can be quite 'sticky', even at moderate acceleration.

I thee wed?

In the couple of hours we've spent messing around with iRing so far, we're more impressed than we expected to be - it has the potential to be an exciting step forward not only for iOS-based music production but for more serious MIDI control, too. It responds as well to movements and gestures as we could hope any camera-driven system would, and while it does look a little… theremin, the feeling you get from controlling, say, a synth's filter cutoff, resonance and delay depth with one hand while actually playing it with the other can only be described as quasi-magical.

The fast movement thing and GUI lag bother us a bit, and we're eager to see that MIDI learn assignment fix applied, but as it stands, iRing appears to work as advertised and feels like it's pitched at a fair price.

A music and technology journalist with over 30 years' professional experience, Ronan Macdonald began his career on UK drummer’s bible, Rhythm, before moving to the world’s leading music software magazine, Computer Music, of which he was editor for over a decade. He’s also written for many other titles, including Future Music, Guitarist, The Mix, Hip-Hop Connection and Mac Format; written and edited several books, including the first edition of Billboard’s Home Recording Handbook and Mixing For Computer Musicians; and worked as an editorial consultant and media producer for a broad range of music technology companies.