A brief history of vocal effects

Tracing the lineage of voice processing, from Voder to Melodyne

People have attempted to artificially manipulate and simulate the human voice for hundreds of years, with limited success, but the development of electronics in the 20th century changed that: Homer Dudley of Bell Labs first showcased his electronic speech synthesiser to critical acclaim at the New York World's Fair in 1939.

Named 'The Voder' (short for Voice Operating Demonstrator), Dudley's huge device comprised an oscillator, a noise generator and a band-pass filter array. It was a complex machine to use, requiring skilled operation by a trained professional, but it produced intelligible speech and singing.

Dudley's other invention was designed to reduce the bandwidth of speech: the 'Voice Encoder', shortened to 'Vocoder', analysed the spectral makeup of the human voice, and these analysis signals were then used to modulate a synth, resulting in a synthesised tone that appeared to talk.
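The analyse-then-modulate idea can be sketched in a few lines of Python. This is a minimal illustration rather than a reconstruction of Dudley's circuit: the function names, the five band centres, the filter Q and the 10 ms envelope smoothing are all assumptions chosen for the example. Each band of the voice is measured with an envelope follower, and that level is used to scale the same band of the synth (the carrier).

```python
import math

def bandpass(signal, fs, centre_hz, q=4.0):
    """Second-order band-pass filter (standard biquad form, 0 dB peak gain)."""
    w0 = 2.0 * math.pi * centre_hz / fs
    alpha = math.sin(w0) / (2.0 * q)
    a0 = 1.0 + alpha
    b0, b2 = alpha / a0, -alpha / a0          # b1 is zero for this filter type
    a1, a2 = -2.0 * math.cos(w0) / a0, (1.0 - alpha) / a0
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x in signal:
        y = b0 * x + b2 * x2 - a1 * y1 - a2 * y2
        x2, x1 = x1, x
        y2, y1 = y1, y
        out.append(y)
    return out

def envelope(signal, fs, smoothing_s=0.01):
    """Rectify the signal and smooth it to follow its loudness over time."""
    coeff = 1.0 - math.exp(-1.0 / (fs * smoothing_s))
    level = 0.0
    out = []
    for x in signal:
        level += coeff * (abs(x) - level)
        out.append(level)
    return out

def vocode(voice, carrier, fs, centres_hz=(250, 500, 1000, 2000, 4000)):
    """Impose the band-by-band loudness of `voice` onto `carrier`."""
    n = min(len(voice), len(carrier))
    out = [0.0] * n
    for centre in centres_hz:
        env = envelope(bandpass(voice[:n], fs, centre), fs)   # analysis
        tone = bandpass(carrier[:n], fs, centre)              # synthesis
        for i in range(n):
            out[i] += env[i] * tone[i]
    return out

# Hypothetical test signals: a 500 Hz sine stands in for the voice,
# and a 110 Hz sawtooth stands in for the synth.
fs = 16000
voice = [math.sin(2 * math.pi * 500 * i / fs) for i in range(1600)]
synth = [2.0 * ((110 * i / fs) % 1.0) - 1.0 for i in range(1600)]
robot = vocode(voice, synth, fs)
```

More bands give more intelligible results, at the cost of more filtering work per sample.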

Dudley's devices were designed for bandwidth reduction and wartime encryption, but as electronics became more affordable, musicians realised the vocoder's potential as a futuristic performance instrument. The user could simultaneously speak into a microphone and play the keyboard, and the vocoder would turn the speech into robotic 'singing'.

Experimental musicians from the German Elektronische Musik movement and the BBC Radiophonic Workshop integrated the vocoder into their compositions and, after researching Dudley's designs, Robert Moog and Wendy Carlos created their own vocoder, with the effect appearing on the seminal soundtrack to Stanley Kubrick's A Clockwork Orange.

German electronic pioneers Kraftwerk used the vocoder on their 1974 album Autobahn, subsequently influencing a new generation of electronic musicians such as Herbie Hancock, Afrika Bambaataa and many other artists throughout the '70s and '80s.

Synthesiser manufacturers, recognising the futuristic instrument's commercial potential, released synths with built-in vocoders as well as standalone models: examples include the EMS 5000, Korg VC-10 and Roland VP-330.


Skip forward to today, and we're used to hearing the likes of Daft Punk use the vocoder to personify their 'singing robot' image, musically fusing voice and synth - although the French act often use a talkbox, a device that feeds an instrument's sound through a tube into the performer's mouth, where it is shaped into vocoder-like effects.

Pitch processing

Eventide's H910 Harmonizer, released in 1975, was the first (relatively) affordable digital pitchshifter. Vocals could be shifted up or down by as much as an octave in real time, either to generate primitive vocal harmonies from a single lead vocal or to create transposition effects for film and TV sound design.

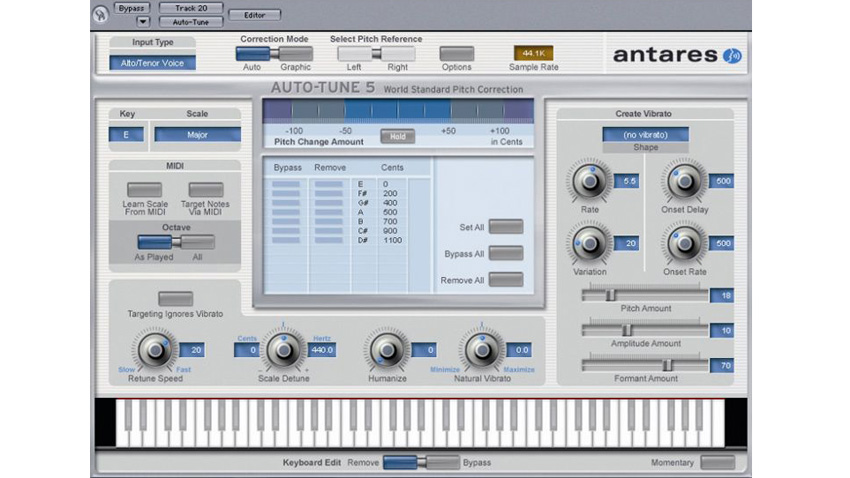

The '90s saw the introduction of the now infamous Auto-Tune. Antares' pitch correction software was designed to automatically align a singer's intonation to the nearest semitone, gently correcting tuning errors - but, like many audio processes, it wasn't long before it was abused for creative gain.

Heavy-handed application locks a vocal to the nearest semitone too quickly, regimenting a vocalist's pitch to the point of unnatural, robotic perfection. Often mistaken for a vocoder effect, the synthetic Auto-Tune warbling was popularised by Cher's 1998 hit, Believe, and has since been used (arguably overused) by artists such as Kanye West, T-Pain and Will.i.am.
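The difference between gentle correction and the hard-locked effect can be illustrated with a small Python sketch. It is not Antares' algorithm: it assumes the pitch has already been detected as a list of frequencies (one per analysis frame), assumes equal temperament with A4 at 440 Hz, and uses a hypothetical `retune` parameter for the fraction of the remaining tuning error removed on each frame.

```python
import math

A4_HZ = 440.0  # assumed tuning reference

def nearest_semitone_hz(freq_hz):
    """Return the equal-tempered note frequency closest to freq_hz."""
    semitones_from_a4 = 12.0 * math.log2(freq_hz / A4_HZ)
    return A4_HZ * 2.0 ** (round(semitones_from_a4) / 12.0)

def correct_pitch(track_hz, retune=1.0):
    """Pull each detected pitch towards its nearest semitone.

    retune=1.0 snaps instantly (the robotic effect);
    small values glide towards the target (gentle correction).
    """
    corrected = []
    offset = 0.0  # correction currently applied, in semitones
    for freq in track_hz:
        target = nearest_semitone_hz(freq)
        error = 12.0 * math.log2(target / freq)
        offset += retune * (error - offset)
        corrected.append(freq * 2.0 ** (offset / 12.0))
    return corrected

# A slightly sharp A, held for a few frames (made-up values).
sung = [450.0, 450.0, 450.0, 450.0]
hard = correct_pitch(sung, retune=1.0)   # locked to the note from the first frame
soft = correct_pitch(sung, retune=0.2)   # drifts towards the note over time
```

With `retune=1.0` every frame lands exactly on the note, which removes the natural slides and vibrato between pitches; lower values leave some of that movement intact.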

Nowadays, programs such as Celemony's Melodyne facilitate note-accurate pitch processing, including the dissection of polyphonic material.

For more on vocal effects and vocoding, pick up Future Music 296, which is on sale now.
